Dec 24, 2013

Case hand prosthesis with sense of touch allows amputees to feel

Via Medgadget

There have been a few attempts at simulating a sense of touch in prosthetic hands, but a recently released video from Case Western Reserve University demonstrates newly developed haptic technology that looks impressive. In the video, an amputee wearing a prosthetic hand with a sensor on the forefinger pulls the stems off cherries while blindfolded and wearing sound-blocking headphones. He does it first with the sensor turned off, then with it activated.

For a picture of the electrode technology, please visit: http://www.flickr.com/photos/tylerlab/10075384624/

20:56 Posted in Neurotechnology & neuroinformatics | Permalink | Comments (0)

NeuroOn mask improves sleep and helps manage jet lag

Via Medgadget

A group of Polish engineers is working on a smart sleeping mask that they hope will let people get more out of their resting time and support unusual sleeping schedules, which would particularly benefit those who are often on call. The NeuroOn mask will have an embedded EEG for brain wave monitoring, EMG for detecting muscle motion on the face, and sensors that can track whether your pupils are moving and whether you are going through REM sleep. The team is currently raising money on Kickstarter, where you can pre-order your own NeuroOn once it's developed into a final product.

The Creative Link: Investigating the Relationship Between Social Network Indices, Creative Performance and Flow in Blended Teams

Andrea Gaggioli, Elvis Mazzoni, Luca Milani, Giuseppe Riva

This paper presents findings of an exploratory study that investigated the relationship between indices of social network structure, flow and creative performance in students collaborating in a blended setting. Thirty undergraduate students enrolled in a Media Psychology course were divided into five groups, which were tasked with designing a new technology-based psychological application. Team members collaborated over a twelve-week period using two main modalities: face-to-face meeting sessions in the classroom (once a week) and virtual collaboration using a groupware tool. Social network indicators of group interaction and presence indices were extracted from communication logs, whereas flow and product creativity were assessed through survey measures. Findings showed that specific social network indices (in particular those measuring decentralization and neighbor interaction) were positively related to flow experience. More broadly, results indicated that selected social network indicators can offer useful insight into the creative collaboration process. Theoretical and methodological implications of these results are drawn.

20:36 Posted in Creativity and computers, Research tools | Permalink | Comments (0)

Evaluation of neurofeedback in ADHD: The long and winding road.

Biol Psychol. 2013 Dec 6;

Authors: Arns M, Heinrich H, Strehl U

Among the clinical applications of neurofeedback, most research has been conducted in ADHD. As an introduction, a short overview of the general history of neurofeedback will be given, while the main part of the paper deals with a review of the current state of neurofeedback in ADHD. A meta-analysis on neurofeedback from 2009 found large effect sizes for inattention and impulsivity and medium effect sizes for hyperactivity. Since 2009 several new studies, including 4 placebo-controlled studies, have been published. These latest studies are reviewed and discussed in more detail. The review focuses on studies employing 1) semi-active, 2) active, and 3) placebo-control groups. The assessment of specificity of neurofeedback treatment in ADHD is discussed and it is concluded that standard protocols such as theta/beta, SMR and slow cortical potentials neurofeedback are well investigated and have demonstrated specificity. The paper ends with an outlook on future questions and tasks. It is concluded that future controlled clinical trials should, in a next step, focus on such known protocols, and be designed along the lines of learning theory.

20:32 Posted in Biofeedback & neurofeedback | Permalink | Comments (0)

Effectiveness and feasibility of virtual reality and gaming system use at home by older adults for enabling physical activity to improve health-related domains: a systematic review

Miller KJ, Adair BS, Pearce AJ, Said CM, Ozanne E, Morris MM. Age Ageing. 2013 Dec 17. [Epub ahead of print]

BACKGROUND: use of virtual reality and commercial gaming systems (VR/gaming) at home by older adults is receiving attention as a means of enabling physical activity. OBJECTIVE: to summarise evidence for the effectiveness and feasibility of VR/gaming system utilisation by older adults at home for enabling physical activity to improve impairments, activity limitations or participation. METHODS: a systematic review searching 12 electronic databases from 1 January 2000 to 10 July 2012 using key search terms. Two independent reviewers screened yield articles using pre-determined selection criteria, extracted data using customised forms and applied the Cochrane Collaboration Risk of Bias Tool and the Downs and Black Checklist to rate study quality. RESULTS: fourteen studies investigating the effects of VR/gaming system use by healthy older adults and people with neurological conditions on activity limitations, body functions and physical impairments and cognitive and emotional well-being met the selection criteria. Study quality ratings were low and, therefore, evidence was not strong enough to conclude that interventions were effective. Feasibility was inconsistently reported in studies. Where feasibility was discussed, strong retention (≥70%) and adherence (≥64%) were reported. Initial assistance to use the technologies, and the need for monitoring exertion, aggravation of musculoskeletal symptoms and falls risk were reported. CONCLUSIONS: existing evidence to support the feasibility and effectiveness of VR/gaming system use by older adults at home to enable physical activity to address impairments, activity limitations and participation is weak with a high risk of bias. The findings of this review may inform future, more rigorous research.

20:29 Posted in Serious games, Virtual worlds | Permalink | Comments (0)

Dec 22, 2013

Cubli

Researchers at the Institute for Dynamic Systems and Control, ETH Zurich, Switzerland, have developed a small cube (15 × 15 × 15 cm) that can jump up and balance on its corner. Reaction wheels mounted on three faces of the cube rotate at high angular velocities and then brake suddenly, causing the Cubli to jump up. Once the Cubli has almost reached the corner stand-up position, controlled motor torques are applied to make it balance on its corner. In addition to balancing, the motor torques can also be used to achieve a controlled fall, so that the Cubli can be commanded to fall in any arbitrary direction. Combining these three abilities (jumping up, balancing, and controlled falling), the Cubli is able to 'walk'.

20:59 Posted in Blue sky | Permalink | Comments (0)

Dec 21, 2013

New Scientist: Mind-reading light helps you stay in the zone

Re-blogged from New Scientist

WITH a click of a mouse, I set a path through the mountains for drone #4. It's one of five fliers under my control, all now heading to different destinations. Routes set, their automation takes over and my mind eases, bringing a moment of calm. But the machine watching my brain notices the lull, decides I can handle more, and drops a new drone in the south-east corner of the map.

The software is keeping my brain in a state of full focus known as flow, or being "in the zone". Too little work, and the program notices my attention start to flag and gives me more drones to handle. If I start to become a frazzled air traffic controller, the computer takes one of the drones off my plate, usually without me even noticing.

The system monitors the workload by pulsing light into my prefrontal cortex 12 times a second. The amount of light that oxygenated and deoxygenated haemoglobin in the blood there absorbs and reflects gives an indication of how mentally engaged I am. Harder brain work calls for more oxygenated blood, and changes how the light is absorbed. Software interprets the signal from this functional near infrared spectroscopy (fNIRS) and uses it to assign me the right level of work.
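The feedback loop described above can be sketched in a few lines of Python. This is only an illustration, not the Tufts software: the `WorkloadController` class, its thresholds, the drone limits and the 0-to-1 workload scale are all assumptions made for the example. It takes a workload estimate (as might be derived from the fNIRS signal) and adds a drone when the operator seems under-loaded or removes one when they seem overloaded.

```python
from dataclasses import dataclass
from typing import Iterable, List


@dataclass
class WorkloadController:
    """Hypothetical controller; thresholds and limits are illustrative assumptions."""
    low: float = 0.3       # below this, the operator is assumed to have spare capacity
    high: float = 0.7      # above this, the operator is assumed to be overloaded
    min_drones: int = 1
    max_drones: int = 8

    def next_drone_count(self, current: int, workload: float) -> int:
        """Return the drone count to use after seeing one workload estimate."""
        if not 0.0 <= workload <= 1.0:
            raise ValueError("workload must be in [0, 1]")
        if workload < self.low:
            return min(current + 1, self.max_drones)   # attention flagging: add work
        if workload > self.high:
            return max(current - 1, self.min_drones)   # overloaded: take a drone away
        return current                                 # in the zone: leave as is


def simulate(controller: WorkloadController, start: int,
             workloads: Iterable[float]) -> List[int]:
    """Apply the controller to a sequence of workload estimates."""
    counts = []
    current = start
    for w in workloads:
        current = controller.next_drone_count(current, w)
        counts.append(current)
    return counts


# A lull, a comfortable stretch, then a spike in workload
print(simulate(WorkloadController(), 5, [0.2, 0.5, 0.9]))  # [6, 6, 5]
```

Starting from five drones, the lull adds a sixth and the later spike removes it again, which mirrors the sequence the reporter describes. A real system would also need to smooth the noisy signal before acting on it.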

Dan Afergan, who is running the study at Tufts University in Medford, Massachusetts, points to an on-screen readout as I play. "It's predicting high workload with very high certainty, and, yup, number three just dropped off," he says over my shoulder. Sure enough, I'm now controlling just five drones again.

To achieve this mind-monitoring, I'm hooked up to a bulky rig of fibre-optic cables and have an array of LEDs stuck to my forehead. The cables stream off my head into a box that converts light signals to electrical ones. These fNIRS systems don't have to be this big, though. A team led by Sophie Piper at Charité University of Medicine in Berlin, Germany, tested a portable device on cyclists in Berlin earlier this year – the first time fNIRS has been done during an outdoor activity.

Afergan doesn't plan to be confined to the lab for long either. He's studying ways to integrate brain-activity measuring into the Google Glass wearable computer. A lab down the hall already has a prototype fNIRS system on a chip that could, with a few improvements, be built into a Glass headset. "Glass is already on your forehead. It's really not much of a stretch to imagine building fNIRS into the headband," he says.

Afergan is working on a Glass navigation system for use in cars that responds to a driver's level of focus. When they are concentrating hard, Glass will show only basic instructions, or perhaps just give audio directions. When the driver is focusing less, on a straight stretch of road perhaps, Glass will provide more details of the route. The team also plans to adapt Google Now – the company's digital assistant software – for Glass so that it only gives you notifications when your mind has room for them.

Peering into drivers' minds will become increasingly important, says Erin Solovey, a computer scientist at Drexel University in Philadelphia, Pennsylvania. Many cars have automatic systems for adaptive cruise control, keeping in the right lane and parking. These can help, but they also bring the risk that drivers may not stay focused on the task at hand, because they are relying on the automation.

Systems using fNIRS could monitor a driver's focus and adjust the level of automation to keep drivers safely engaged with what the car is doing, she says.

This article appeared in print under the headline "Stay in the zone"

Happy Christmas from Positive Technology Journal

19:49 | Permalink | Comments (0)

Dec 19, 2013

Tricking the brain with transformative virtual reality

18:40 Posted in Telepresence & virtual presence, Virtual worlds | Permalink | Comments (0)

Dec 08, 2013

Real-Time fMRI Pattern Decoding and Neurofeedback Using FRIEND: An FSL-Integrated BCI Toolbox

PLoS One. 2013;8(12):e81658

Authors: Sato JR, Basilio R, Paiva FF, Garrido GJ, Bramati IE, Bado P, Tovar-Moll F, Zahn R, Moll J

Abstract. The demonstration that humans can learn to modulate their own brain activity based on feedback of neurophysiological signals opened up exciting opportunities for fundamental and applied neuroscience. Although EEG-based neurofeedback has been long employed both in experimental and clinical investigation, functional MRI (fMRI)-based neurofeedback emerged as a promising method, given its superior spatial resolution and ability to gauge deep cortical and subcortical brain regions. In combination with improved computational approaches, such as pattern recognition analysis (e.g., Support Vector Machines, SVM), fMRI neurofeedback and brain decoding represent key innovations in the field of neuromodulation and functional plasticity. Expansion in this field and its applications critically depend on the existence of freely available, integrated and user-friendly tools for the neuroimaging research community. Here, we introduce FRIEND, a graphic-oriented user-friendly interface package for fMRI neurofeedback and real-time multivoxel pattern decoding. The package integrates routines for image preprocessing in real-time, ROI-based feedback (single-ROI BOLD level and functional connectivity) and brain decoding-based feedback using SVM. FRIEND delivers an intuitive graphic interface with flexible processing pipelines involving optimized procedures embedding widely validated packages, such as FSL and libSVM. In addition, a user-defined visual neurofeedback module allows users to easily design and run fMRI neurofeedback experiments using ROI-based or multivariate classification approaches. FRIEND is open-source and free for non-commercial use. Processing tutorials and extensive documentation are available.

23:39 Posted in Biofeedback & neurofeedback, Neurotechnology & neuroinformatics | Permalink | Comments (0)

iMirror

Take back your mornings with the iMirror – the interactive mirror for your home. Watch the video for a live demo!

23:26 Posted in Augmented/mixed reality, Future interfaces | Permalink | Comments (0)

How to use mind-controlled robots in manufacturing, medicine

via KurzweilAI

University at Buffalo researchers are developing brain-computer interface (BCI) devices to mentally control robots.

“The technology has practical applications that we’re only beginning to explore,” said Thenkurussi “Kesh” Kesavadas, PhD, UB professor of mechanical and aerospace engineering and director of UB’s Virtual Reality Laboratory. “For example, it could help paraplegic patients to control assistive devices, or it could help factory workers perform advanced manufacturing tasks.”

Most BCI research has involved expensive, invasive devices that are inserted into the brain and are used mostly to help people with disabilities.

UB research relies on a relatively inexpensive ($750), non-invasive external device (Emotiv EPOC). It reads EEG brain activity with 14 sensors and transmits the signal wirelessly to a computer, which then sends signals to the robot to control its movements.

Kesavadas recently demonstrated the technology with Pramod Chembrammel, a doctoral student in his lab. Chembrammel trained with the instrument for a few days, then used the device to control a robotic arm.

He used the arm to insert a wood peg into a hole and rotate the peg. “It was incredible to see the robot respond to my thoughts,” Chembrammel said. “It wasn’t even that difficult to learn how to use the device.”

The video (below) shows that a simple set of instructions can be combined to execute more complex robotic actions, Kesavadas said. Such robots could be used by factory workers to perform hands-free assembly of products, or carry out tasks like drilling or welding.
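The idea of combining a simple set of instructions into more complex actions can be illustrated with a short Python sketch. Everything here is hypothetical: the primitive names, the `insert_and_turn_peg` macro and the toy robot state are invented for the example and are not taken from the UB system or the Emotiv software.

```python
from typing import Callable, Dict, List, Tuple

# Toy robot state (an assumption): (arm position, gripper rotation in degrees, gripper closed?)
State = Tuple[int, int, bool]

# Simple instructions, each of which could be triggered by one detected mental command
PRIMITIVES: Dict[str, Callable[[State], State]] = {
    "grip":    lambda s: (s[0], s[1], True),
    "release": lambda s: (s[0], s[1], False),
    "advance": lambda s: (s[0] + 1, s[1], s[2]),
    "retract": lambda s: (s[0] - 1, s[1], s[2]),
    "rotate":  lambda s: (s[0], (s[1] + 90) % 360, s[2]),
}

# A more complex action defined as an ordered sequence of primitives
MACROS: Dict[str, List[str]] = {
    "insert_and_turn_peg": ["grip", "advance", "rotate", "release", "retract"],
}


def run(command: str, state: State) -> State:
    """Execute a primitive, or expand a macro into its primitives and run them in order."""
    for step in MACROS.get(command, [command]):
        state = PRIMITIVES[step](state)
    return state


print(run("insert_and_turn_peg", (0, 0, False)))  # (0, 90, False)
```

With a layout like this, the operator only has to produce one recognizable command to start a multi-step task, which is one plausible way to keep the mental vocabulary small.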

The potential advantage, Kesavadas said, is that BCI-controlled devices could reduce the tedium of performing repetitious tasks and improve worker safety and productivity. The devices can also leverage the worker’s decision-making skills, such as identifying a faulty part in an automated assembly line.

23:10 Posted in AI & robotics, Brain-computer interface | Permalink | Comments (0)

Dec 02, 2013

Meaning in life: An important factor for the psychological well-being of chronically ill patients?

Rehabilitation Psychology - Vol 55, Iss 3

Dezutter, Jessie; Casalin, Sara; Wachholtz, Amy; Luyckx, Koen; Hekking, Jessica; Vandewiele, Wim

Purpose: This study aimed to investigate 2 dimensions of meaning in life, Presence of Meaning (i.e., the perception of your life as significant, purposeful, and valuable) and Search for Meaning (i.e., the strength, intensity, and activity of people’s efforts to establish or increase their understanding of the meaning in their lives), and their role in the well-being of chronically ill patients. Research design: A sample of 481 chronically ill patients (M = 50 years, SD = 7.26) completed measures on meaning in life, life satisfaction, optimism, and acceptance. We hypothesized that Presence of Meaning and Search for Meaning will have specific relations with all 3 aspects of well-being. Results: Cluster analysis was used to examine meaning in life profiles. Results supported 4 distinguishable profiles (High Presence High Search, Low Presence High Search, High Presence Low Search, and Low Presence Low Search) with specific patterns in relation to well-being and acceptance. Specifically, the 2 profiles in which meaning is present showed higher levels of well-being and acceptance, whereas the profiles in which meaning is absent are characterized by lower levels. Furthermore, the results provided some clarification on the nature of the Search for Meaning process by distinguishing between adaptive (the High Presence High Search cluster) and maladaptive (the Low Presence High Search cluster) searching for meaning in life. Conclusions: The present study provides an initial glimpse into how meaning in life may be related to the well-being of chronically ill patients and the acceptance of their condition. Clinical implications are discussed. (PsycINFO Database Record (c) 2013 APA, all rights reserved)

23:37 Posted in Technology & spirituality | Permalink | Comments (0)

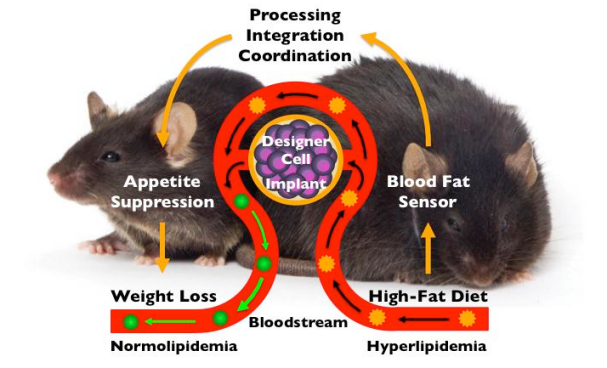

A genetically engineered weight-loss implant

Via KurzweilAI

ETH-Zurich biotechnologists have constructed an implantable genetic regulatory circuit that monitors blood-fat levels. In response to excessive levels, it produces a messenger substance that signals satiety (fullness) to the body. Tests on obese mice revealed that this helps them lose weight.

Genetically modified cells implanted in the body monitor the blood-fat level. If it is too high, they produce a satiety hormone. The animal stops eating and loses weight. (Credit: Martin Fussenegger / ETH Zurich / Jackson Lab)

23:25 Posted in Neurotechnology & neuroinformatics, Physiological Computing | Permalink | Comments (0)

Nov 29, 2013

Real-time Neurofeedback Using Functional MRI Could Improve Down-Regulation of Amygdala Activity During Emotional Stimulation

Real-time Neurofeedback Using Functional MRI Could Improve Down-Regulation of Amygdala Activity During Emotional Stimulation: A Proof-of-Concept Study.

Brain Topogr. 2013 Nov 16;

Authors: Brühl AB, Scherpiet S, Sulzer J, Stämpfli P, Seifritz E, Herwig U

Abstract. The amygdala is a central target of emotion regulation. It is overactive and dysregulated in affective and anxiety disorders, and amygdala activity normalizes with successful therapy of the symptoms. However, a considerable percentage of patients do not reach remission within an acceptable duration of treatment. The amygdala could therefore represent a promising target for real-time functional magnetic resonance imaging (rtfMRI) neurofeedback. rtfMRI neurofeedback directly improves the voluntary regulation of localized brain activity. At present, most rtfMRI neurofeedback studies have trained participants to increase activity of a target, i.e. up-regulation. However, in the case of the amygdala, down-regulation is supposedly more clinically relevant. Therefore, we developed a task that trained participants to down-regulate activity of the right amygdala while being confronted with amygdala stimulation, i.e. negative emotional faces. The activity in the functionally-defined region was used as online visual feedback in six healthy subjects instructed to minimize this signal using reality checking as an emotion regulation strategy. Over a period of four training sessions, participants significantly increased down-regulation of the right amygdala compared to a passive viewing condition used to control for habituation effects. This result supports the concept of using rtfMRI neurofeedback training to control brain activity during relevant stimulation, specifically in the case of emotion, and has implications for clinical treatment of emotional disorders.

00:06 Posted in Brain-computer interface | Permalink | Comments (0)

Nov 28, 2013

OutRun: Augmented Reality Driving Video Game

Garnet Hertz's video game concept car combines a car-shaped arcade game cabinet with a real world electric vehicle to produce a video game system that actually drives. OutRun offers a unique mixed reality simulation as one physically drives through an 8-bit video game. The windshield of the system features custom software that transforms the real world into an 8-bit video game, enabling the user to have limitless gameplay opportunities while driving. Hertz has designed OutRun to de-simulate the driving component of a video game: where game simulations strive to be increasingly realistic (usually focused on graphics), this system pursues "real" driving through the game. Additionally, playing off the game-like experience one can have driving with an automobile navigation system, OutRun explores the consequences of using only a computer model of the world as a navigation tool for driving.

More info: http://conceptlab.com/outrun/

23:55 Posted in Augmented/mixed reality | Permalink | Comments (0)

Effect of mindfulness meditation on brain-computer interface performance

Conscious Cogn. 2013 Nov 22;23C:12-21

Authors: Tan LF, Dienes Z, Jansari A, Goh SY

Abstract. Electroencephalogram-based Brain-Computer Interfaces (BCIs) enable stroke and motor neuron disease patients to communicate and control devices. Mindfulness meditation has been claimed to enhance metacognitive regulation. The current study explores whether mindfulness meditation training can thus improve the performance of BCI users. To eliminate the possibility of expectation of improvement influencing the results, we introduced a music training condition. A norming study found that both meditation and music interventions elicited clear expectations for improvement on the BCI task, with the strength of expectation being closely matched. In the main 12-week intervention study, seventy-six healthy volunteers were randomly assigned to three groups: a meditation training group; a music training group; and a no-treatment control group. The mindfulness meditation training group obtained a significantly higher BCI accuracy compared to both the music training and no-treatment control groups after the intervention, indicating effects of meditation above and beyond expectancy effects.

23:40 Posted in Brain-computer interface, Mental practice & mental simulation | Permalink | Comments (0)

Nov 24, 2013

How robots will change the world

Great BBC documentary (40')

23:51 Posted in AI & robotics | Permalink | Comments (0)

Call for papers IDGEI 2014 - International Workshop on Intelligent Digital Games for Empowerment and Inclusion

Call for papers IDGEI 2014 - International Workshop on Intelligent Digital Games for Empowerment and Inclusion - associated with "Intelligent User Interfaces IUI 2014"

2nd International Workshop on Intelligent Digital Games for Empowerment and Inclusion.

Digital Games for Empowerment and Inclusion have the potential to change our society in a most positive way by preparing selected groups, in a playful and fun way, for the social and special situations of their everyday lives. Exemplary domains range from children with Autism Spectrum Condition to young adults preparing for their first job interviews or migrants familiarizing themselves with their new environment. The current generation of such games increasingly demands computational intelligence algorithms to help analyze players’ behavior and monitor their motivation and interest in order to adapt game progress. The development of such games thus usually requires expertise from the general gaming domain, and in particular from a game’s target domain, as well as the technological savoir-faire to provide intelligent analysis and reaction solutions. IDGEI 2014 aims to bridge these communities and disciplines by inviting researchers and experts to discuss their latest perspectives and findings in the field of Intelligent Digital Games for Empowerment and Inclusion.

Suggested workshop topics include, but are by no means limited to:

- Machine Intelligence in Serious Games

- Mobile and Real-World Serious Gaming

- Emotion & Affect in Serious Games

- Player Behavior and Attention Modeling

- Player-Adaptation and Motivation

- Security & Privacy Preservation

- Novel Serious Games

- User Studies & Tests of Serious Games

Paper submission deadline 4 December 2013.

Associated with International Conference on Intelligent User Interfaces 2014.

For more info, download Call for Papers (PDF; 582 KB)

19:23 Posted in Call for papers, Positive Technology events, Serious games | Permalink | Comments (0)