Feb 28, 2017

Ping-Pong Robot

Developed by Omron Corporation, FORPHEUS (Future Omron Robotics Technology for Exploring Possibility of Harmonized aUtomation with Sinic Theoretics) has officially been awarded the Guinness World Records title of first robot table tennis tutor for its unique technological intelligence and educational capabilities.

According to the project's lead developer Taku Oya, the goal of FORPHEUS was to harmonise humans and robots, by way of teaching the game of table tennis to human players.

The machine can act as a coach thanks to cutting-edge vision and motion sensors that it uses to gauge movement during a match. FORPHEUS also features an array of cameras situated above the ping-pong table, which monitor the position of the ball at an impressive rate of 80 times per second. This functionality also allows the robot to show its human student a projected image of where the return ball will land, so that they may improve their skills.
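To give a feel for how a landing point could be projected from camera samples, here is a minimal sketch. It is not Omron's algorithm: it assumes plain projectile motion (no spin, no air drag), positions in metres with z measured above the table surface, and two consecutive samples taken at the 80 Hz rate mentioned above. The function names `estimate_velocity` and `predict_landing` are hypothetical.

```python
import math

SAMPLE_RATE_HZ = 80   # tracking rate quoted in the post
GRAVITY = 9.81        # m/s^2, assumed constant


def estimate_velocity(p0, p1, dt=1.0 / SAMPLE_RATE_HZ):
    """Finite-difference velocity from two consecutive (x, y, z) samples."""
    return tuple((b - a) / dt for a, b in zip(p0, p1))


def predict_landing(position, velocity, table_height=0.0):
    """Return (x, y, t) where the ball reaches table_height, or None.

    Assumption: drag-free, spin-free flight, so
    z(t) = z + vz*t - 0.5*g*t^2 and x, y move at constant speed.
    """
    x, y, z = position
    vx, vy, vz = velocity
    a = -0.5 * GRAVITY
    b = vz
    c = z - table_height
    disc = b * b - 4 * a * c
    if disc < 0:
        return None  # the ball never reaches the table height
    # Because a is negative, this root is the later (downward) crossing.
    t = (-b - math.sqrt(disc)) / (2 * a)
    if t < 0:
        return None
    return (x + vx * t, y + vy * t, t)


# Usage: two samples 1/80 s apart, ball 0.3 m above the table.
v = estimate_velocity((0.0, 0.0, 0.3), (0.05, 0.0, 0.3))
landing = predict_landing((0.05, 0.0, 0.3), v)
```

A real system would have to account for spin, bounce and measurement noise, typically by fitting the trajectory over many samples rather than two.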

23:02 Posted in AI & robotics | Permalink | Comments (0)

Jun 21, 2016

New book on Human Computer Confluence - FREE PDF!

Two pieces of good news for Positive Technology followers.

1) Our new book on Human Computer Confluence is out!

2) It can be downloaded for free here

Human-computer confluence refers to an invisible, implicit, embodied or even implanted interaction between humans and system components. New classes of user interfaces are emerging that make use of several sensors and are able to adapt their physical properties to the current situational context of users.

A key aspect of human-computer confluence is its potential for transforming human experience in the sense of bending, breaking and blending the barriers between the real, the virtual and the augmented, to allow users to experience their body and their world in new ways. Research on Presence, Embodiment and Brain-Computer Interface is already exploring these boundaries and asking questions such as: Can we seamlessly move between the virtual and the real? Can we assimilate fundamentally new senses through confluence?

The aim of this book is to explore the boundaries and intersections of the multidisciplinary field of HCC and discuss its potential applications in different domains, including healthcare, education, training and even arts.

DOWNLOAD THE FULL BOOK HERE AS OPEN ACCESS

Please cite as follows:

Andrea Gaggioli, Alois Ferscha, Giuseppe Riva, Stephen Dunne, Isabell Viaud-Delmon (2016). Human computer confluence: transforming human experience through symbiotic technologies. Warsaw: De Gruyter. ISBN 9783110471120.

09:53 Posted in AI & robotics, Augmented/mixed reality, Biofeedback & neurofeedback, Blue sky, Brain training & cognitive enhancement, Brain-computer interface, Cognitive Informatics, Cyberart, Cybertherapy, Emotional computing, Enactive interfaces, Future interfaces, ICT and complexity, Neurotechnology & neuroinformatics, Positive Technology events, Research tools, Self-Tracking, Serious games, Technology & spirituality, Telepresence & virtual presence, Virtual worlds, Wearable & mobile | Permalink

May 24, 2016

Computational Personality?

The field of artificial intelligence (AI) has undergone a dramatic evolution in recent years. The impressive advances in this field have inspired several leaders in the scientific and technological community - including Stephen Hawking and Elon Musk - to raise concerns about a potential domination of machines over humans.

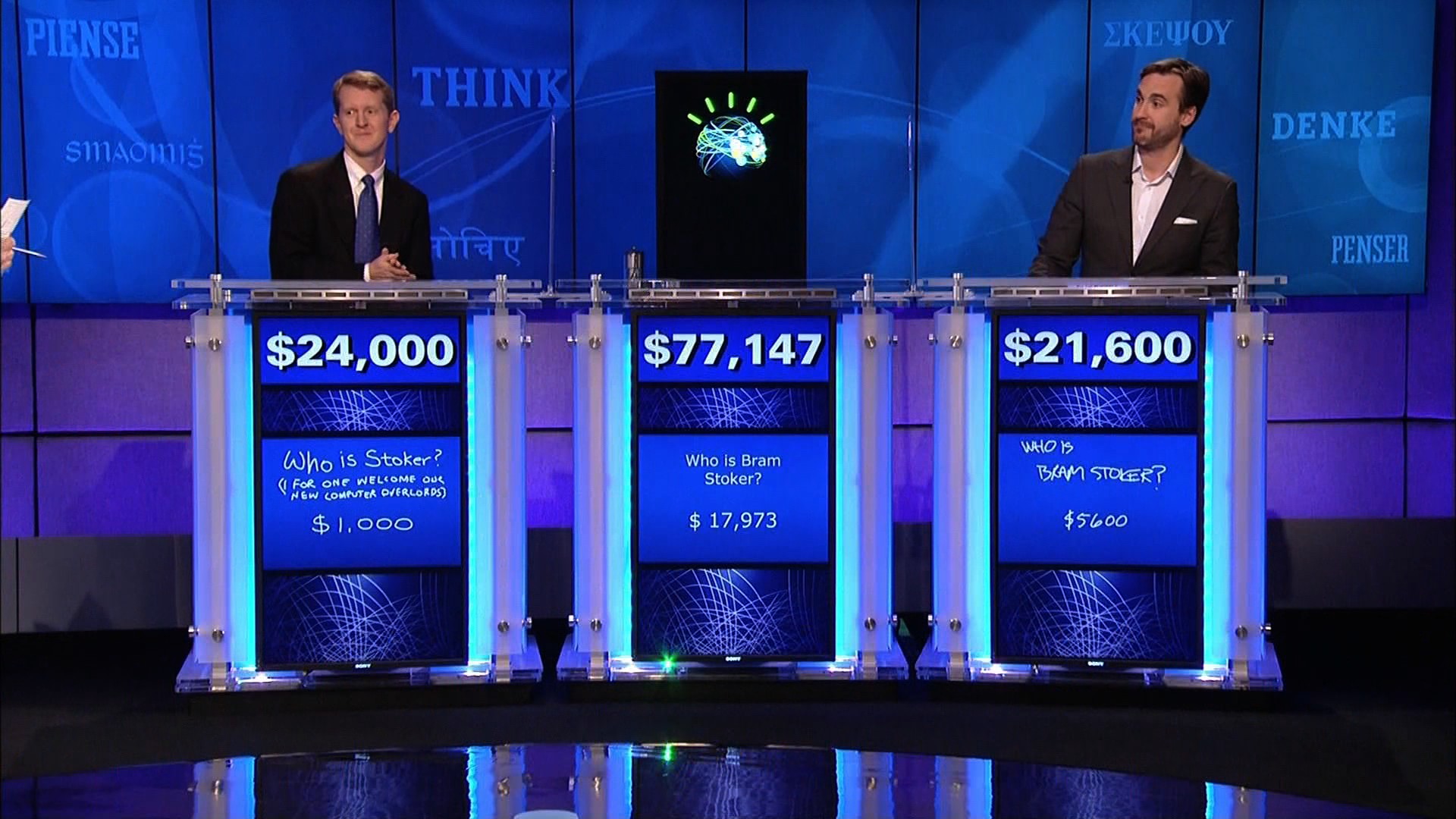

While many people still think about AI as robots with human-like characteristics, this field is much broader and includes a number of diverse tools and applications, from Siri to self-driving cars, to autonomous weapons. Among the key innovations in the AI field, IBM’s Watson computer system is certainly one of the most popular.

Developed within IBM’s DeepQA project led by principal investigator David Ferrucci, Watson can answer questions posed in natural language, and also features advanced cognitive abilities such as information retrieval, knowledge representation, automatic reasoning, and “open domain question answering”.

Thanks to these advanced functions, Watson could compete at the human champion level in real time on the American TV quiz show Jeopardy! This impressive result has opened up several potential business applications of so-called “cognitive computing”, i.e. targeting big data analytics problems in health, pharma, and other business sectors. But psychology, too, may be one of the next frontiers of the cognitive computing revolution.

For example, Watson Personality Insights is a service designed to automatically generate psychological profiles on the basis of unstructured text extracted from mails, tweets, blog posts, articles and forums. In addition to a description of your personality, needs and values, the program provides an automated analysis of the “Big Five” personality traits: openness, conscientiousness, extroversion, agreeableness, and neuroticism; all these data can then be visualized in a graphic representation. According to IBM’s documentation, to give a reliable estimate of personality, the Watson program requires at least 3,500 words, but preferably 6,000 words. Furthermore, the content of the text should ideally reflect personal experiences, thoughts and responses. The psychological model behind the service is based on studies showing that the frequency with which we use certain categories of words can provide clues to personality, thinking style, social connections, and emotional stress variations.
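The idea of counting word categories can be illustrated with a toy sketch. This is not IBM's model or API: the three-category lexicon below is invented purely for illustration, and only the 3,500 and 6,000 word thresholds come from the text above. The names `category_frequencies` and `sample_adequacy` are hypothetical.

```python
import re
from collections import Counter

MIN_WORDS = 3500        # minimum sample size quoted above
PREFERRED_WORDS = 6000  # preferred sample size quoted above

# Hypothetical toy lexicon; real psycholinguistic dictionaries are far larger.
CATEGORIES = {
    "social": {"friend", "family", "we", "together"},
    "negative_emotion": {"worried", "sad", "afraid", "angry"},
    "cognitive": {"think", "because", "know", "reason"},
}


def tokenize(text):
    """Lowercase the text and split it into simple word tokens."""
    return re.findall(r"[a-z']+", text.lower())


def category_frequencies(text):
    """Share of all tokens that fall into each lexicon category."""
    words = tokenize(text)
    total = len(words)
    counts = Counter()
    for word in words:
        for category, vocabulary in CATEGORIES.items():
            if word in vocabulary:
                counts[category] += 1
    return {c: (counts[c] / total if total else 0.0) for c in CATEGORIES}


def sample_adequacy(text):
    """Classify the sample size against the thresholds quoted above."""
    n = len(tokenize(text))
    if n >= PREFERRED_WORDS:
        return "preferred"
    if n >= MIN_WORDS:
        return "minimum"
    return "insufficient"


# Usage
freqs = category_frequencies("We think because we know")
```

Turning such frequencies into trait scores would require a model trained on people with known personality measures, which is well beyond this sketch.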

Clearly, many psychologists (and non-psychologists, too) may have several doubts about the reliability and accuracy of this service. Furthermore, for some people, collecting social media data to identify psychological traits may lead to Orwellian scenarios. Although these concerns are understandable, they may be mitigated by the important positive applications and benefits that this technology may bring about for individuals, organizations and society.

11:44 Posted in AI & robotics, Big Data, Blue sky, Computational psychology | Permalink | Comments (0)

Apr 27, 2016

Predictive Technologies: Can Smart Tools Augment the Brain's Predictive Abilities?

Apr 05, 2015

Are you concerned about AI?

Recently, a growing number of opinion leaders have started to point out the potential risks associated with the rapid advancement of Artificial Intelligence. This shared concern has led an interdisciplinary group of scientists, technologists and entrepreneurs to sign an open letter (http://futureoflife.org/misc/open_letter/), drafted by the Future of Life Institute, which focuses on priorities to be considered as Artificial Intelligence develops as well as on the potential dangers posed by this paradigm.

The concern that machines may soon dominate humans, however, is not new: in the last thirty years, this topic has been widely represented in movies (e.g. Terminator, The Matrix), novels and various interactive arts. For example, Australian-based performance artist Stelarc has incorporated themes of cyborgization and other human-machine interfaces in his work, by creating a number of installations that confront us with the question of where the human ends and technology begins.

In his well-received 2005 book “The Singularity Is Near: When Humans Transcend Biology” (Viking Penguin: New York), inventor and futurist Ray Kurzweil argued that Artificial Intelligence is one of the interacting forces that, together with genetics, robotics and nanotechnology, may soon converge to overcome our biological limitations and usher in the beginning of the Singularity, during which Kurzweil predicts that human life will be irreversibly transformed. According to Kurzweil, the Singularity will take place around 2045 and will probably represent the most extraordinary event in all of human history.

Ray Kurzweil’s vision of the future of intelligence is at the forefront of the transhumanist movement, which considers scientific and technological advances as a means to augment human physical and cognitive abilities, with the final aim of improving and even extending life. According to transhumanists, however, the choice whether to benefit from such enhancement options should generally reside with the individual. The concept of transhumanism has been criticized, among others, by the influential American philosopher of technology, Don Ihde, who pointed out that no technology will ever be completely internalized, since any technological enhancement implies a compromise. Ihde has distinguished four different relations that humans can have with technological artifacts. In particular, in the “embodiment relation” a technology becomes (quasi)transparent, allowing a partial symbiosis of ourselves and the technology. In wearing eyeglasses, as Ihde exemplifies, I do not look “at” them but “through” them at the world: they are already assimilated into my body schema, withdrawing from my perceiving.

According to Ihde, there is a doubled desire which arises from such embodiment relations: “It is the doubled desire that, on one side, is a wish for total transparency, total embodiment, for the technology to truly "become me." (...) But that is only one side of the desire. The other side is the desire to have the power, the transformation that the technology makes available. Only by using the technology is my bodily power enhanced and magnified by speed, through distance, or by any of the other ways in which technologies change my capacities. (…) The desire is, at best, contradictory. I want the transformation that the technology allows, but I want it in such a way that I am basically unaware of its presence. I want it in such a way that it becomes me. Such a desire both secretly rejects what technologies are and overlooks the transformational effects which are necessarily tied to human-technology relations. This illusory desire belongs equally to pro- and anti-technology interpretations of technology.” (Ihde, D. (1990). Technology and the Lifeworld: From Garden to Earth. Bloomington: Indiana University Press, p. 75).

Despite the different philosophical stances and assumptions on what our future relationship with technology will look like, there is little doubt that these questions will become more pressing and acute in the coming years. In my personal view, technology should not be viewed as a means to replace human life, but as an instrument for improving it. As William S. Haney II suggests in his book “Cyberculture, Cyborgs and Science Fiction: Consciousness and the Posthuman” (Rodopi: Amsterdam, 2006), “each person must choose for him or herself between the technological extension of physical experience through mind, body and world on the one hand, and the natural powers of human consciousness on the other as a means to realize their ultimate vision.” (ix, Preface).

23:26 Posted in AI & robotics, Blue sky, ICT and complexity | Permalink | Comments (0)

Nov 01, 2014

SoftBank's humanoid robot lands job as Nescafe salesman

(Reuters)

Nestle SA will enlist a thousand humanoid robots to help sell its coffee makers at electronics stores across Japan, becoming the first corporate customer for the chatty, bug-eyed androids unveiled in June by tech conglomerate SoftBank Corp.

Nestle has maintained healthy growth in Japan while many of its big markets are slowing, crediting a tradition of trying out off-beat marketing tactics in what is a small but profitable territory for the world's biggest food group.

The waist-high robot, developed by a French company and manufactured in Taiwan, was touted by Japan's SoftBank as capable of learning and expressing human emotions, and of serving as a companion or guide in a country that faces chronic labor shortages.

Nestle said on Wednesday it would initially commission 20 of the robots, called Pepper, in December to interact with customers and promote its coffee machines. By the end of next year, the maker of Nescafe coffee and KitKat chocolate bars plans to have the robots working at 1,000 stores.

"We hope this new type of made-in-Japan customer service will take off around the world," Nestle Japan President Kohzoh Takaoka said in a statement.

Nestle did not say how much it was paying for Pepper, which SoftBank has said would retail for 198,000 yen ($1,830). The robot is already greeting customers at more than 70 SoftBank mobile phone stores in Japan.

Among Nestle's most successful Japan-only initiatives is the Nescafe Ambassador system, in which individuals stock coffee pods and collect money for them at their offices in exchange for free use of machines and other perks. Nestle wants half a million "ambassadors" by 2020 - nearly quadruple the number now - as it expands into museums, beauty salons and even temples.

The Japanese unit has also developed hundreds of KitKat flavors including wasabi and green tea, and this year rolled out a KitKat that can be baked into cookies.

The latest creation from Aldebaran, Pepper is the first robot designed to live with humans.

21:28 Posted in AI & robotics | Permalink | Comments (0)

Oct 12, 2014

MIT Robotic Cheetah

MIT researchers have developed an algorithm for bounding that they've successfully implemented in a robotic cheetah. (Learn more: http://mitsha.re/1uHoltW)

I am not that impressed by the result though.

22:20 Posted in AI & robotics | Permalink | Comments (0)

Aug 03, 2014

JIBO: The World's First Family Robot

Jibo is a new robot from MIT roboticist Cynthia Breazeal. It is designed to be a social robot that you interact with like it’s another person in your home. The 28-centimetre, 3-kilogram “sociable robot” snaps family photos, handles video calling and acts as a digital concierge. Connected wirelessly to the Internet, Jibo sifts through messages, organizes your itinerary and orders takeout.

What people say about Jibo:

"JIBO's potential extends far beyond engaging in casual conversation and completing daily tasks." - Katie Couric, Yahoo News

"A Robot with a Little Humanity" - John Markoff, New York Times

"JIBO isn't an appliance, it's a companion, one that can interact and react with its human owners in ways that delight instead of disturb." - Lance Ulanoff, Mashable

"Move over, Siri, the JIBO robot is coming" - Maggie Lake, CNN

"This Friendly Robot Could One Day Be Your Family's Personal Assistant" - Christina Bonnington, WIRED

22:51 Posted in AI & robotics | Permalink | Comments (0)

Jul 29, 2014

Ekso bionic suit

Ekso is an exoskeleton bionic suit or a "wearable robot" designed to enable individuals with lower extremity paralysis to stand up and walk over ground with a weight-bearing, four-point reciprocal gait. Walking is achieved by the user’s forward lateral weight shift to initiate a step. Battery-powered motors drive the legs and replace neuromuscular function.

Ekso Bionics http://eksobionics.com/

14:59 Posted in AI & robotics, Cybertherapy, Wearable & mobile | Permalink | Comments (0)

Dec 08, 2013

How to use mind-controlled robots in manufacturing, medicine

via KurzweilAI

University at Buffalo researchers are developing brain-computer interface (BCI) devices to mentally control robots.

“The technology has practical applications that we’re only beginning to explore,” said Thenkurussi “Kesh” Kesavadas, PhD, UB professor of mechanical and aerospace engineering and director of UB’s Virtual Reality Laboratory. “For example, it could help paraplegic patients to control assistive devices, or it could help factory workers perform advanced manufacturing tasks.”

Most BCI research has involved expensive, invasive BCI devices that are inserted into the brain, and used mostly to help disabled people.

UB research relies on a relatively inexpensive ($750), non-invasive external device (Emotiv EPOC). It reads EEG brain activity with 14 sensors and transmits the signal wirelessly to a computer, which then sends signals to the robot to control its movements.

Kesavadas recently demonstrated the technology with Pramod Chembrammel, a doctoral student in his lab. Chembrammel trained with the instrument for a few days, then used the device to control a robotic arm.

He used the arm to insert a wood peg into a hole and rotate the peg. “It was incredible to see the robot respond to my thoughts,” Chembrammel said. “It wasn’t even that difficult to learn how to use the device.”

The video (below) shows that a simple set of instructions can be combined to execute more complex robotic actions, Kesavadas said. Such robots could be used by factory workers to perform hands-free assembly of products, or carry out tasks like drilling or welding.

The potential advantage, Kesavadas said, is that BCI-controlled devices could reduce the tedium of performing repetitious tasks and improve worker safety and productivity. The devices can also leverage the worker’s decision-making skills, such as identifying a faulty part in an automated assembly line.
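The point about combining a simple set of instructions into more complex actions can be sketched as a small command hierarchy. Everything here is hypothetical: the primitive names, the macros and the `expand` helper are illustrative and are not taken from the UB system or the Emotiv software.

```python
# Hypothetical primitive commands a BCI classifier might emit.
PRIMITIVES = {"grip", "move_to_hole", "lower", "rotate", "release"}

# Hypothetical macros: each complex action is a list of simpler ones.
MACROS = {
    "insert_peg": ["grip", "move_to_hole", "lower"],
    "insert_and_rotate": ["insert_peg", "rotate", "release"],
}


def expand(command, _seen=()):
    """Flatten a command into the ordered list of primitives it stands for."""
    if command in PRIMITIVES:
        return [command]
    if command in _seen:
        raise ValueError(f"cyclic macro definition: {command}")
    if command not in MACROS:
        raise KeyError(f"unknown command: {command}")
    steps = []
    for sub in MACROS[command]:
        steps.extend(expand(sub, _seen + (command,)))
    return steps


# Usage: one high-level command unfolds into five primitive steps.
plan = expand("insert_and_rotate")
```

The design choice here is that the user only has to produce one recognisable mental command, while the sequencing of low-level motions is handled in software.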

23:10 Posted in AI & robotics, Brain-computer interface | Permalink | Comments (0)

Nov 24, 2013

How robots will change the world

Great BBC documentary (40')

23:51 Posted in AI & robotics | Permalink | Comments (0)

Apr 05, 2013

Humanoid Robots Being Studied for Autism Therapy

Via MedGadget

Researchers at Vanderbilt University are studying the potential benefits of using human-looking robots as tools to help kids with autism spectrum disorder (ASD) improve their communication skills. The programmable NAO robot used in the study was developed by Aldebaran Robotics out of Paris, France, and offers the ability to be part of a larger, smarter system.

Though a child might feel like the pink eyed humanoid is an autonomous being, the NAO robot that the team is using is actually hooked up to computers and external cameras that track the kid’s movements. Using the newly developed ARIA (Adaptive Robot-Mediated Intervention Architecture) protocol, they found that children paid more attention to NAO and followed in exercises almost as well as with a human adult therapist.

15:21 Posted in AI & robotics, Cybertherapy | Permalink | Comments (0)

Mar 03, 2013

Permanently implanted neuromuscular electrodes allow natural control of a robotic prosthesis

Source: Chalmers University of Technology

“The new technology is a major breakthrough that has many advantages over current technology, which provides very limited functionality to patients with missing limbs,” Brånemark says.

Presently, robotic prostheses rely on electrodes over the skin to pick up the muscles' electrical activity to drive a few actions by the prosthesis. The problem with this approach is that normally only two functions are regained out of the tens of different movements an able-bodied person is capable of. By using implanted electrodes, more signals can be retrieved, and therefore control of more movements is possible. Furthermore, it is also possible to provide the patient with natural perception, or “feeling”, through neural stimulation.

“We believe that implanted electrodes, together with a long-term stable human-machine interface provided by the osseointegrated implant, is a breakthrough that will pave the way for a new era in limb replacement,” says Rickard Brånemark.

Read full story

14:39 Posted in AI & robotics, Neurotechnology & neuroinformatics | Permalink | Comments (0)

Kibo space robot underwent zero gravity testing

Via Gizmag

The Japanese communication robot destined to join the crew aboard the International Space Station (ISS) this summer recently underwent some zero gravity testing. The Kibo Robot Project, organized by Dentsu Inc. in response to a proposal made by the Japan Aerospace Exploration Agency, unveiled the final design of its diminutive humanoid robot and its Earthbound counterpart.

Watch the video:

14:29 Posted in AI & robotics | Permalink | Comments (0)

Oct 27, 2012

DARPA Robotics Challenge

Via KurzweilAI

DARPA has announced the start of the next DARPA Robotics Challenge. This time, the goal is to develop ground robots that perform complex tasks in "dangerous, degraded human-engineered environments". That means robots that perform humanitarian, disaster relief operations. The robots must use standard human hand tools and vehicles to navigate a debris field, open doors, climb ladders, and break through a concrete wall. Most but not all of the robots will be humanoid in design.

The challenge is divided into two parts with a Virtual Robotics Challenge scheduled for 10 - 24 June, 2013 to test simulated robots and the actual DARPA Robotics Challenge scheduled for 21 December, 2013. DARPA has adopted the free software Gazebo simulator, which supports ROS. There are two competition "tracks" - competitors in Track A will develop their own humanoid robot and control software, while competitors in Track B will develop control software that runs on a DARPA-supplied Atlas robot built by Boston Dynamics. University teams are already announcing their participation. Read on for more info about some of the teams, as well as some awesome photos and videos of the robots in action.

13:29 Posted in AI & robotics, Research institutions & funding opportunities | Permalink | Comments (0)

May 07, 2012

Mind-controlled robot allows a quadriplegic patient to move virtually in space

Researchers at the Federal Institute of Technology in Lausanne, Switzerland (EPFL), have successfully demonstrated a robot controlled by the mind of a partially quadriplegic patient in a hospital 62 miles away. The EPFL brain-computer interface system does not require invasive neural implants in the brain, since it is based on a special EEG cap fitted with electrodes that record the patient’s neural signals. The task of the patient is to imagine moving his paralyzed fingers, and this input is then translated by the BCI system into commands for the robot.

16:06 Posted in AI & robotics, Brain-computer interface, Telepresence & virtual presence | Permalink | Comments (0)

Nov 26, 2011

Curiosity (did not) kill the cat

Today at 10:02 am the latest Mars Rover, Curiosity, was launched into deep space. The $2.5 billion exploratory system started its eight-month journey to Mars, where it will spend another two years researching the conditions for (past or future) life. The nuclear-powered Curiosity is much larger than any previous Mars Rover and five times heavier. Its equipment includes a drill on a 2.1-meter arm and a laser to vaporize rocks for easier onboard analysis.

When I first watched this video this morning I was really amazed by the technology, the landing strategy and the terrific level of sophistication of the rover system. Then I thought to myself - if there is enough brainpower on Earth to make this vision a reality, then it must also be possible to work out a solution for the global economy!

19:50 Posted in AI & robotics, Blue sky | Permalink | Comments (0)

Jul 27, 2011

A robot that flies like a bird

The poetry of technology...

00:07 Posted in AI & robotics, Blue sky | Permalink | Comments (0)

Oct 19, 2010

HRP-4C cybernetic human dance

Dance Robot LIVE! is a performance recently shown at the Digital Content Expo in Tokyo. The performance features AIST's feminine HRP-4C robot and four humans. The routine was produced by renowned dancer/choreographer SAM-san and the lip-synced song is a Vocaloid version of "Deatta Koro no Yō ni" by Kaori Mochida (Every Little Thing).

00:00 Posted in AI & robotics | Permalink | Comments (0) | Tags: robotics, hrp4c, dance

Oct 07, 2010

Raytheon shows off the XOS2 Exoskeleton robotic suit

03:04 Posted in AI & robotics | Permalink | Comments (0) | Tags: robotic suit