Dec 14, 2018

Transformative Experience Design

In the last couple of years, my team and I have been working intensively on a new research program in Positive Technology: Transformative Experience Design.

In short, the goal of this project is to understand how virtual reality, brain-based technologies and the language of arts can support transformative experiences, that is, emotional experiences that promote deep personal change.

About Transformative Experience Design

As noted by Miller and C’de Baca, there are experiences in life that are able to generate profound and long-lasting shifts in core beliefs and attitudes, including subjective self-transformation. These experiences have the capacity of changing not only what individuals know and value, but also how they see the world.

According to Mezirow’s Transformative Learning Theory, these experiences can be triggered by a “disorienting dilemma” usually related to a life crisis or major life transition (e.g., death, illness, separation, or divorce), which forces individuals to critically examine and eventually revise their core assumptions and beliefs. The outcome of a transformative experience is a significant and permanent change in the expectations – mindsets, perspectives and habits of mind – through which we filter and make sense of the world. Because of these characteristics, transformative experiences are gaining increasing attention not only in psychology and neuroscience, but also in philosophy.

From a psychological perspective, transformative change is often associated with specific experiential states, termed “self-transcendent experiences”. These are transient mental states that allow individuals to experience something greater than themselves, to reflect on deeper dimensions of their existence and to shape lasting spiritual beliefs. These experiences encompass several mental states, including flow, positive emotions such as awe and elevation, “peak” experiences, “mystical” experiences and mindfulness (for a review, see Yaden et al.). Although the phenomenological profile of these experiential states can vary significantly in terms of quality and intensity, they are characterized by a diminished sense of self and increased feelings of connectedness to other people and one’s surroundings. Previous research has shown that self-transcendent experiences are important sources of positive psychological outcomes, including increased meaning in life, positive mood and life satisfaction, positive behavior change, spiritual development and pro-social attitudes.

One potentially interesting question related to self-transcendent experiences concerns whether, and to what extent, these mental states can be invited or elicited by means of interactive technologies. This question lies at the center of a new research program – Transformative Experience Design (TED) – which has a two-fold aim:

- to systematically investigate the phenomenological and neuro-cognitive aspects of self-transcendent experiences, as well as their implications for individual growth and psychological wellbeing; and

- to translate such knowledge into a tentative set of design principles for developing “e-experiences” that support meaning in life and personal growth.

The three pillars of TED: virtual reality, arts and neurotechnologies

I have identified three possible assets that can be combined to achieve this goal:

- The first asset is the use of advanced simulation technologies, such as virtual, augmented and mixed reality, as the medium of choice to generate controlled alterations of perceptual, motor and cognitive processes.

- The second asset regards the use of the language of arts to create emotionally-compelling storytelling scenarios.

- The third and final element of TED concerns the use of brain-based technologies, such as brain stimulation and bio/neurofeedback, to modulate neuro-physiological processes underlying self-transcendence mental states, using a closed-loop approach.

The central assumption of TED is that the combination of these means provides a broad spectrum of transformative possibilities, which include, for example, “what it is like” to embody another self or another life form, simulating peculiar neurological phenomena like synesthesia or out-of-body experiences, and altering time and space perception.

The safe and controlled use of these e-experiences holds the potential to facilitate self-knowledge and self-understanding, foster creative expression, develop new skills, and recognize and learn the value of others.

Examples of TED research projects

Although TED is a recent research program, we are building a fast-growing community of researchers, artists and developers to shape the next generation of transformative experiences. Here is a list of recent projects and publications related to TED in different application contexts.

The Emotional Labyrinth

In this project I teamed up with Sergi Bermudez i Badia and Mónica S. Cameirão from the Madeira Interactive Technologies Institute to realize the first example of an emotionally adaptive virtual reality application for mental health. So far, virtual reality applications in wellbeing and therapy have typically been based on pre-designed objects and spaces. In this project, we suggest a different approach, in which the content of a virtual world is procedurally generated at runtime (that is, through algorithmic means) according to the user’s affective responses. To demonstrate the concept, we developed a first prototype using Unity: the “Emotional Labyrinth”. In this VR experience, the user walks through an endless maze, whose structure and contents are automatically generated according to four basic emotional states: joy, sadness, anger and fear.

During navigation, affective states are dynamically represented through pictures, music, and animated visual metaphors chosen to represent and induce emotional states.

The underlying hypothesis is that exposing users to multimodal representations of their affective states can create a feedback loop that supports emotional self-awareness and fosters more effective emotional regulation strategies. We carried out a first study to (i) assess the effectiveness of the selected metaphors in inducing target emotions, and (ii) identify relevant psycho-physiological markers of the emotional experience generated by the labyrinth. Results showed that the Emotional Labyrinth is overall a pleasant experience in which the proposed procedural content generation can induce distinctive psycho-physiological patterns, generally coherent with the meaning of the metaphors used in the labyrinth design. Further, collected psycho-physiological responses such as electrocardiography, respiration, electrodermal activity, and electromyography are used to generate computational models of users' reported experience. These models enable the future implementation of the closed loop mechanism to adapt the Labyrinth procedurally to the users' affective state.
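To make the idea of emotion-driven procedural generation more concrete, here is a minimal Python sketch. It is not the Unity prototype: the theme values, the function names `generate_maze` and `next_segment`, and the use of a randomized depth-first search are all assumptions chosen for illustration. Only the four emotional states come from the project description above.

```python
import random

# Illustrative themes for the four emotional states named in the post.
# The concrete values are assumptions, not the assets used in the prototype.
THEMES = {
    "joy": {"palette": "warm", "music": "major, fast", "size": 4},
    "sadness": {"palette": "cold", "music": "minor, slow", "size": 6},
    "anger": {"palette": "red", "music": "dissonant, loud", "size": 5},
    "fear": {"palette": "dark", "music": "sparse, low", "size": 7},
}


def generate_maze(width, height, rng):
    """Carve a maze with a randomized depth-first search.

    Returns a dict mapping each cell (x, y) to the set of neighbouring
    cells it is connected to by an open passage.
    """
    passages = {(x, y): set() for x in range(width) for y in range(height)}
    visited = {(0, 0)}
    stack = [(0, 0)]
    while stack:
        x, y = stack[-1]
        unvisited = [
            (nx, ny)
            for nx, ny in ((x + 1, y), (x - 1, y), (x, y + 1), (x, y - 1))
            if (nx, ny) in passages and (nx, ny) not in visited
        ]
        if not unvisited:
            stack.pop()  # dead end: backtrack to the previous cell
            continue
        nxt = rng.choice(unvisited)
        passages[(x, y)].add(nxt)
        passages[nxt].add((x, y))
        visited.add(nxt)
        stack.append(nxt)
    return passages


def next_segment(emotion, rng):
    """Build the next maze segment for the currently estimated emotion."""
    theme = THEMES[emotion]
    size = theme["size"]
    return {
        "emotion": emotion,
        "palette": theme["palette"],
        "music": theme["music"],
        "maze": generate_maze(size, size, rng),
    }


if __name__ == "__main__":
    rng = random.Random(42)
    # Stand-in for a stream of emotion estimates from a physiological model.
    for emotion in ["joy", "fear", "sadness"]:
        segment = next_segment(emotion, rng)
        print(emotion, segment["palette"], len(segment["maze"]), "cells")
```

In a closed-loop version, the hard-coded list of emotions would be replaced by the output of a model trained on the psycho-physiological signals, so that each new segment reflects the user's most recent estimated state.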

Awe in Virtual Reality

Awe is a compelling emotional experience with philosophical roots in the domain of aesthetics and religious or spiritual experiences. Both Edmund Burke’s (1759/1970) and Immanuel Kant’s (1764/2007) analyses of the sublime as a compelling experience that transcends one’s perception of beauty to something more profound are couched in terms that seem synonymous with the modern understanding of awe.

The contemporary psychological understanding of awe comes largely from a foundational article written by Keltner and Haidt (2003). According to their conceptualization, awe experiences encompass two key appraisals: the perception of vastness and the need to mentally attempt to accommodate this vastness into existing mental schemas.

Crucially, research has shown that experiencing awe is associated with positive transformative changes at both psychological and physical levels (e.g., Shiota et al., 2007; Schneider, 2009; Stellar et al., 2015). For example, awe can change our perspective toward even unknown others, thus increasing our generous attitude toward them (Piff et al., 2015; Prade and Saroglou, 2016) and reducing aggressive behaviors (Yang et al., 2016). Generally, awe broadens our attentional focus (Sung and Yih, 2015), and extends time perception (Rudd et al., 2012). Furthermore, this emotion protects our immune system against chronic and cardiovascular diseases and enhances our satisfaction with life (Krause and Hayward, 2015; Stellar et al., 2015).

Considering the transformative potential of awe, my doctoral student Alice Chirico and I focused on how to elicit intense feelings of this complex emotion using virtual reality. To this end, we modeled three immersive virtual environments (i.e., a forest including tall trees; a chain of mountains; and an earth view from deep space) designed to induce a feeling of perceptual vastness. As hypothesized, the three target environments induced significantly greater awe than a "neutral" virtual environment (a park consisting of a green clearing with very few trees and some flowers). Full details of this study are reported here.

In another study, we examined the potential of VR-induced awe to foster creativity. To this end, we exposed participants both to an awe-inducing 3D video and to a neutral one in a within-subject design. After each stimulation condition, participants reported the intensity and type of perceived emotion and completed two verbal tasks of the Torrance Tests of Creative Thinking (TTCT; Torrance, 1974), a standardized test to measure creativity performance. Results showed that, compared to a neutral stimulus, awe positively affected key creativity components measured by the TTCT subtest (fluency, flexibility, and elaboration), suggesting that (i) awe has potential for boosting creativity, and (ii) VR is a powerful awe-inducing medium that can be used in different application contexts (e.g., educational or clinical) where this emotion can make a difference.

However, graphical 3D environments are not the only way to induce awe: in another study, we showed that 360° videos depicting vast natural scenarios are also powerful stimuli for inducing intense feelings of this complex emotion.

Immersive storytelling for psychotherapy and mental wellbeing

Growing research evidence indicates that VR can be effectively integrated in psychotherapy to treat a number of clinical conditions, including anxiety disorders, pain disorders and PTSD. In this context, VR is mostly used as a simulative tool for controlled exposure to critical/fearful situations. The possibility of presenting realistic controlled stimuli and, simultaneously, of monitoring the responses generated by the user offers a considerable advantage over real experiences.

However, the most interesting potential of VR resides in its capacity of creating compelling immersive storytelling experiences. As recently noted by Brenda Wiederhold:

Virtual training simulations, documentaries, and experiences will, however, only be as effective as the emotions they spark in the viewer. To reach that point, the VR industry is faced with two obstacles: creating content that is enjoyable and engaging, and encouraging adoption of the medium among consumers. Perhaps the key to both problems is the recognition that users are not passive consumers of VR content. Rather, they bring their own thoughts, needs, and emotions to the worlds they inhabit. Successful stories challenge those conceptions, invite users to engage with the material, and recognize the power of untethering users from their physical world and throwing them into another. That isn’t just the power of VR—it’s the power of storytelling as a whole.

Thus, VR-based narratives can be used to generate an infinite number of “possible selves”, by providing a person a “subjective window of presence” into unactualized, but possible, worlds.

The emergence of immersive storytelling introduces the possibility of using VR in mental health from a different rationale than virtual reality-based exposure therapy. In this novel rationale, immersive stories, lived from a first-person perspective, provide the patient the opportunity of engaging emotionally with metaphoric narratives, eliciting new insights and meaning-making related to viewers’ personal world views.

To explore this new perspective, I have been collaborating with the Italian startup Become to test the potential of transformative immersive storytelling in mental health and wellbeing. An intriguing aspect of this strategy is that, in contrast with conventional virtual-reality exposure therapy, which is mostly used in combination with Cognitive-Behavioral Therapy interventions, immersive storytelling scenarios can be integrated in any therapeutic model, since all kinds of psychotherapy involve some form of ‘storytelling’.

In this project, we are interested in understanding, for example, whether the integration of immersive stories in the therapeutic setting can enhance the efficacy of the intervention and facilitate patients in expressing their inner thoughts, feelings, and life experiences.

Collaborate!

Are you a researcher, a developer, or an artist interested in collaborating in TED projects? Here is how:

- Drop me an email at: andrea.gaggioli@unicatt.it

- Sign into ResearchGate and visit Transformative Experience Design project's page

- Have a look at the existing projects and publications to find out which TED research line is more interesting to you.

Key references

[1] Miller, W. R., & C'de Baca, J. (2001). Quantum change: When epiphanies and sudden insights transform ordinary lives. New York: Guilford Press.

[2] Yaden, D. B., Haidt, J., Hood, R. W., Jr., Vago, D. R., & Newberg, A. B. (2017). The varieties of self-transcendent experience. Review of General Psychology, 21(2), 143-160.

[3] Gaggioli, A. (2016). Transformative Experience Design. In Human Computer Confluence. Transforming Human Experience Through Symbiotic Technologies, eds A. Gaggioli, A. Ferscha, G. Riva, S. Dunne, and I. Viaud-Delmon (Berlin: De Gruyter Open), 96–121.

Jan 02, 2017

Why we should fix inequalities in science

Science is a source of progress and the best hope for the future of mankind. With a world population reaching seven billion individuals and a growing consumption of (increasingly scarce) natural resources, the only chance that we have to avoid the collapse of civilization caused by our own expansion is to find new strategies for sustainable development. But addressing this challenge will be impossible without the support of scientific and technological innovation.

Thanks to scientific research, we have conquered space, developed therapies for devastating pathologies, and explored the mysteries of matter. Science is illuminating our understanding of the most complex object in nature—the brain—and expanding our knowledge of the universe. But today, science is suffering from several diseases.

In most countries, researchers struggle to find the economic resources to carry out their research and keep their jobs. Since research funding is scarce, scientists are forced to compete with peers in order to obtain it. The odds of winning this hard competition, however, depend increasingly on the scientific impact and productivity of grant seekers rather than on the excellence of the research proposals. As a consequence, researchers who are not able to produce a decent number of publications in sufficiently prestigious outlets have almost no chance of receiving funding and realizing their ideas. This is why the notorious motto, publish or perish, has become the #1 concern of most researchers in the world.

The pressure to publish has several negative implications. First, it increases conflicts of interest and the risk of scientific misconduct, for example falsification or fabrication of data. Furthermore, the spasmodic need to increase one’s h-index (a way to measure academic impact) leads researchers (and especially younger scholars) to focus on topics that are currently more mainstream or fashionable, and thus more likely to attract a greater number of citations from other authors. And last - but not least - while the rush to publish can generate more papers, it also increases the volume of poor scientific work. It could be argued that only a competitive system, such as the current one, can make it possible to select the best talents and ideas, thus ensuring the highest return on investment for society. But in reality, there is no evidence that the increase in scientific productivity is associated with better research outcomes.

Furthermore, as recently shown by University of Michigan sociologist Yu Xie, science is becoming more and more a ‘‘winner takes all’’ field, in which a few talented scientists receive much greater recognition and rewards than lesser-known scientists for comparable contributions. As a consequence, many young researchers, although brilliant, have little chance of being recognized at all because most of the available resources are taken by the ‘‘giants’’ of their scientific disciplines. But in addition to diminishing integrity, lowering scientific quality, and spreading frustration among younger scholars, the current system may also threaten the very driving forces behind science: the passion to invent and discover. As noted by Teresa Amabile and Steven Kramer, two prominent experts of innovation, ‘‘what doesn’t motivate creativity can kill it.’’


Jun 21, 2016

New book on Human Computer Confluence - FREE PDF!

Two pieces of good news for Positive Technology followers.

1) Our new book on Human Computer Confluence is out!

2) It can be downloaded for free here

Human-computer confluence refers to an invisible, implicit, embodied or even implanted interaction between humans and system components. New classes of user interfaces are emerging that make use of several sensors and are able to adapt their physical properties to the current situational context of users.

A key aspect of human-computer confluence is its potential for transforming human experience in the sense of bending, breaking and blending the barriers between the real, the virtual and the augmented, to allow users to experience their body and their world in new ways. Research on Presence, Embodiment and Brain-Computer Interface is already exploring these boundaries and asking questions such as: Can we seamlessly move between the virtual and the real? Can we assimilate fundamentally new senses through confluence?

The aim of this book is to explore the boundaries and intersections of the multidisciplinary field of HCC and discuss its potential applications in different domains, including healthcare, education, training and even arts.

DOWNLOAD THE FULL BOOK HERE AS OPEN ACCESS

Please cite as follows:

Andrea Gaggioli, Alois Ferscha, Giuseppe Riva, Stephen Dunne, Isabell Viaud-Delmon (2016). Human computer confluence: transforming human experience through symbiotic technologies. Warsaw: De Gruyter. ISBN 9783110471120.


May 26, 2016

From User Experience (UX) to Transformative User Experience (T-UX)

In 1999, Joseph Pine and James Gilmore wrote a seminal book titled “The Experience Economy” (Harvard Business School Press, Boston, MA) that theorized the shift from a service-based economy to an experience-based economy.

According to these authors, in the new experience economy the goal of the purchase is no longer to own a product (be it a good or service), but to use it in order to enjoy a compelling experience. An experience, thus, is a whole-new type of offer: in contrast to commodities, goods and services, it is designed to be as personal and memorable as possible. Just as in a theatrical representation, companies stage meaningful events to engage customers in a memorable and personal way, by offering activities that provide engaging and rewarding experiences.

Indeed, if one looks back at the past ten years, the concept of experience has become more central to several fields, including tourism, architecture, and – perhaps more relevant for this column – to human-computer interaction, with the rise of “User Experience” (UX).

The concept of UX was introduced by Donald Norman in a 1995 article published in the CHI proceedings (D. Norman, J. Miller, A. Henderson: What You See, Some of What's in the Future, And How We Go About Doing It: HI at Apple Computer. Proceedings of CHI 1995, Denver, Colorado, USA). Norman argued that focusing exclusively on usability attributes (e.g., ease of use, efficacy, effectiveness) when designing an interactive product is not enough; one should take into account the whole experience of the user with the system, including users’ emotional and contextual needs. Since then, the UX concept has assumed an increasing importance in HCI. As McCarthy and Wright emphasized in their book “Technology as Experience” (MIT Press, 2004):

“In order to do justice to the wide range of influences that technology has in our lives, we should try to interpret the relationship between people and technology in terms of the felt life and the felt or emotional quality of action and interaction.” (p. 12).

However, according to Pine and Gilmore, experience may not be the last step of what they call the “Progression of Economic Value”. They speculated further into the future, by identifying the “Transformation Economy” as the likely next phase. In their view, while experiences are essentially memorable events which stimulate the sensorial and emotional levels, transformations go much further in that they are the result of a series of experiences staged by companies to guide customers in learning, taking action and eventually achieving their aspirations and goals.

In Pine and Gilmore’s terms, an aspirant is the individual who seeks advice for personal change (e.g., a better figure, a new career, and so forth), while the provider of this change (a dietitian, a university) is an elicitor. The elicitor guides the aspirant through a series of experiences which are designed with a certain purpose and goals. According to Pine and Gilmore, the main difference between an experience and a transformation is that the latter occurs when an experience is customized:

“When you customize an experience to make it just right for an individual - providing exactly what he needs right now - you cannot help changing that individual. When you customize an experience, you automatically turn it into a transformation, which companies create on top of experiences (recall that phrase: “a life-transforming experience”), just as they create experiences on top of services and so forth” (p. 244).

A further key difference between experiences and transformations concerns their effects: because an experience is inherently personal, no two people can have the same one. Likewise, no individual can undergo the same transformation twice: the second time it’s attempted, the individual would no longer be the same person (p. 254-255).

But what will be the impact of this upcoming “transformation economy” on how people relate to technology? If in the experience economy the buzzword is “User Experience”, in the next stage the new buzzword might be “User Transformation”.

Indeed, we can see some initial signs of this shift. For example, FitBit and similar self-tracking gadgets are starting to offer personalized advice to foster enduring changes in users’ lifestyle; another example is from the fields of ambient intelligence and domotics, where there is an increasing focus on designing systems that are able to learn from the user’s behaviour (e.g., by tracking the movements of an elderly person at home) to provide context-aware adaptive services (e.g., sending an alert when the user is at risk of falling).

But the most important ICT step towards the transformation economy is likely to come with the introduction of next-generation immersive virtual reality systems. Since these new systems are based on mobile devices (an example is the recent partnership between Oculus and Samsung), they are able to deliver VR experiences that incorporate information on the external/internal context of the user (e.g., time, location, temperature, mood) by using the sensors embedded in the mobile phone.

By personalizing the immersive experience with context-based information, it might be possible to induce higher levels of involvement and presence in the virtual environment. In the case of cyber-therapeutic applications, this could translate into the development of more effective, transformative virtual healing experiences.

Furthermore, the emergence of "symbiotic technologies", such as neuroprosthetic devices and neuro-biofeedback, is enabling a direct connection between the computer and the brain. Increasingly, these neural interfaces are moving from the biomedical domain to become consumer products. But unlike existing digital experiential products, symbiotic technologies have the potential to transform more radically basic human experiences.

Brain-computer interfaces, immersive virtual reality and augmented reality and their various combinations will allow users to create “personalized alterations” of experience. Just as nowadays we can download and install a number of “plug-ins”, i.e. apps to personalize our experience with hardware and software products, so very soon we may download and install new “extensions of the self”, or “experiential plug-ins” which will provide us with a number of options for altering/replacing/simulating our sensorial, emotional and cognitive processes.

Such mediated recombinations of human experience will result from the application of existing neuro-technologies in completely new domains. Although virtual reality and brain-computer interfaces were originally developed for applications in specific domains (e.g., military simulations, neurorehabilitation), today the use of these technologies has been extended to other fields of application, ranging from entertainment to education.

In the field of biology, Stephen Jay Gould and Elizabeth Vrba (Paleobiology, 8, 4-15, 1982) defined “exaptation” as the process in which a feature acquires a function that was not acquired through natural selection. Likewise, the exaptation of neurotechnologies to the digital consumer market may lead to the rise of a novel “neuro-experience economy”, in which technology-mediated transformation of experience is the main product.

Just as a Genetically-Modified Organism (GMO) is an organism whose genetic material is altered using genetic-engineering techniques, so we could define a Technologically-Modified Experience (ETM) as a re-engineered experience resulting from the artificial manipulation of the neurobiological bases of sensorial, affective, and cognitive processes.

Clearly, the emergence of the transformative neuro-experience economy will not happen in weeks or months but rather in years. It will take some time before people will find brain-computer devices on the shelves of electronic stores: most of these tools are still in the pre-commercial phase at best, and some are found only in laboratories.

Nevertheless, the mere possibility that such a scenario will sooner or later come to pass raises important questions that should be addressed before symbiotic technologies enter our lives: does technological alteration of human experience threaten the autonomy of individuals, or the authenticity of their lives? How can we help individuals decide which transformations are good or bad for them?

Addressing these important issues will require the collaboration of many disciplines, including philosophy, computer ethics and, of course, cyberpsychology.

May 24, 2016

Virtual reality painting tool


Computational Personality?

The field of artificial intelligence (AI) has undergone a dramatic evolution in the last years. The impressive advances in this field have inspired several leaders in the scientific and technological community - including Stephen Hawking and Elon Musk - to raise concerns about a potential domination of machines over humans.

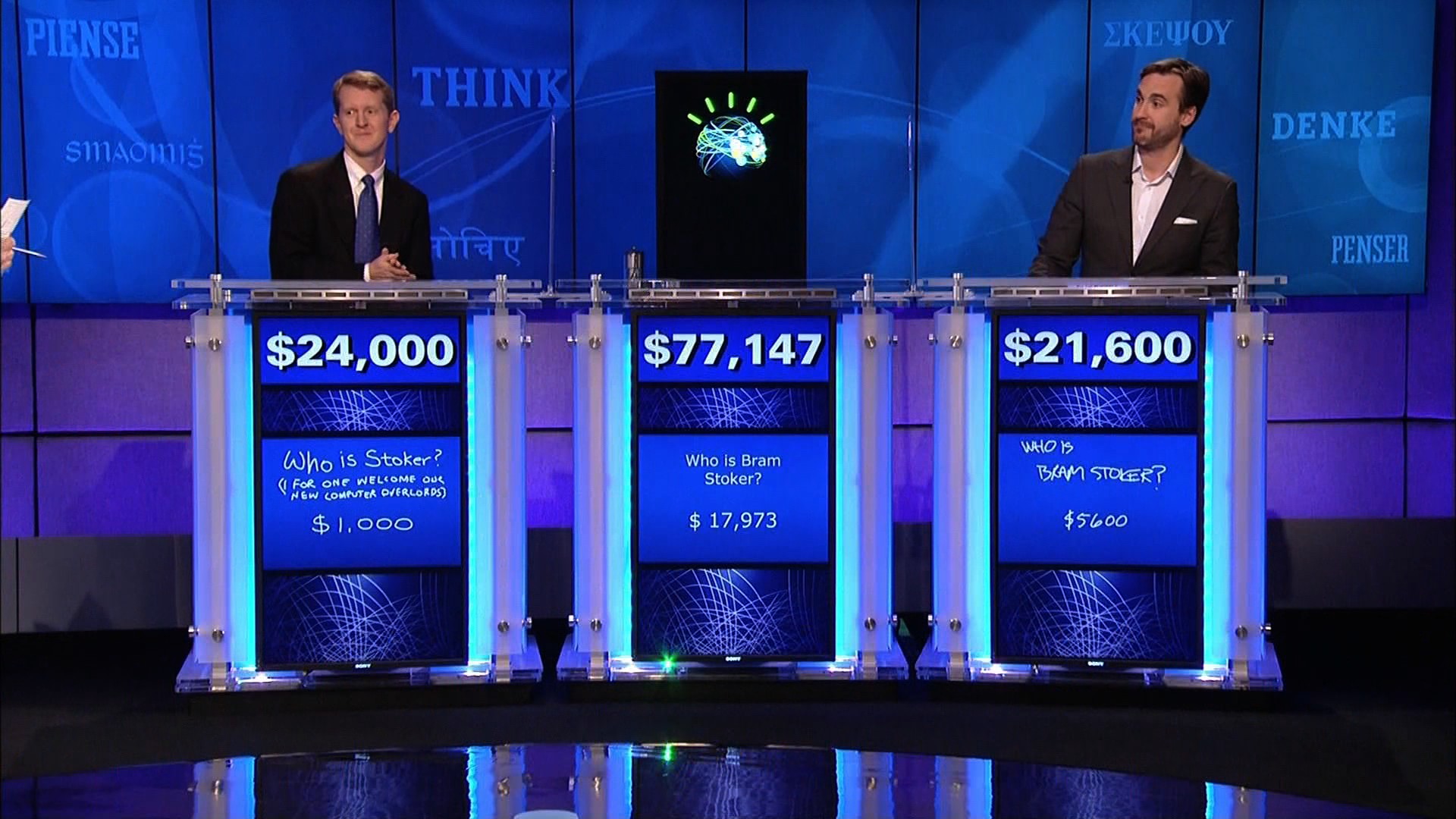

While many people still think of AI as robots with human-like characteristics, this field is much broader and includes a number of diverse tools and applications, from Siri to self-driving cars, to autonomous weapons. Among the key innovations in the AI field, IBM’s Watson computer system is certainly one of the most popular.

Developed within IBM’s DeepQA project led by principal investigator David Ferrucci, Watson can answer questions posed in natural language, and also features advanced cognitive abilities such as information retrieval, knowledge representation, automatic reasoning, and “open domain question answering”.

Thanks to these advanced functions, Watson could compete at the human champion level in real time on the American TV quiz show Jeopardy!. This impressive result has opened up several potential business applications of so-called “cognitive computing”, e.g., targeting big data analytics problems in health, pharma, and other business sectors. But psychology, too, may be one of the next frontiers of the cognitive computing revolution.

For example, Watson Personality Insights is a service designed to automatically generate psychological profiles on the basis of unstructured text extracted from emails, tweets, blog posts, articles and forums. In addition to a description of your personality, needs and values, the program provides an automated analysis of “Big Five” personality traits: openness, conscientiousness, extroversion, agreeableness, and neuroticism; all these data can then be visualized in a graphic representation. According to IBM’s documentation, to give a reliable estimate of personality, the Watson program requires at least 3,500 words, but preferably 6,000 words. Furthermore, the content of the text should ideally reflect personal experiences, thoughts and responses. The psychological model behind the service is based on studies showing that the frequency with which we use certain categories of words can provide clues to personality, thinking style, social connections, and emotional stress variations.
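The two ingredients mentioned above, a minimum amount of text and the frequency of word categories, can be illustrated with a small Python sketch. This is not IBM's service or its API: the toy lexicon, the category names and the functions `sample_adequacy` and `category_frequencies` are hypothetical, and only the 3,500 and 6,000 word thresholds come from the text above.

```python
import re
from collections import Counter

MIN_WORDS = 3500        # minimum amount of text cited in the post
PREFERRED_WORDS = 6000  # preferred amount of text cited in the post

# Toy lexicon: hypothetical categories and words, for illustration only.
LEXICON = {
    "social": {"friend", "family", "we", "talk"},
    "negative_emotion": {"sad", "angry", "worried", "afraid"},
    "achievement": {"win", "goal", "success", "work"},
}


def tokenize(text):
    """Split text into lowercase word tokens."""
    return re.findall(r"[a-z']+", text.lower())


def sample_adequacy(n_words):
    """Classify a word count against the two thresholds."""
    if n_words < MIN_WORDS:
        return "insufficient"
    if n_words < PREFERRED_WORDS:
        return "acceptable"
    return "preferred"


def category_frequencies(text):
    """Return the share of tokens that fall into each lexicon category."""
    words = tokenize(text)
    counts = Counter()
    for word in words:
        for category, vocabulary in LEXICON.items():
            if word in vocabulary:
                counts[category] += 1
    total = len(words)
    return {
        category: (counts[category] / total if total else 0.0)
        for category in LEXICON
    }


if __name__ == "__main__":
    text = "We talk with family about work, and I am worried about my goal."
    print(sample_adequacy(len(tokenize(text))))
    print(category_frequencies(text))
```

A real system would map such category frequencies to trait scores through a model validated against questionnaire data; the sketch stops at the feature-extraction step.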

Clearly, many psychologists (and non-psychologists, too) may have several doubts about the reliability and accuracy of this service. Furthermore, for some people, collecting social media data to identify psychological traits may lead to Orwellian scenarios. Although these concerns are understandable, they may be mitigated by the important positive applications and benefits that this technology may bring about for individuals, organizations and society.


Incentivized competitions boost innovation

The last decade has witnessed tremendous advances in technological innovation. This is also thanks to the growing diffusion of open innovation platforms, which have leveraged the explosion of social networks and digital media to promote a new culture of “bottom-up” discovery and invention.

An example of the potential of open innovation to revolutionize technology and science is provided by online crowdfunding sites for creative projects, such as Kickstarter and Indiegogo. In the last few years, these online platforms have supported thousands of projects, including extremely innovative products such as the Oculus headset, which has contributed to the renaissance of Virtual Reality.

Incentivized competitions represent a further strategy for engaging the public and gathering innovative ideas on a global scale. This approach consists in identifying the most interesting challenges and inviting the community to solve them.

One of the first and most popular incentivized competitions is the Ansari X-Prize, which celebrates its 10th anniversary this year. Funded by the Ansari family, the Ansari X-Prize challenged teams from around the world to build a reliable, reusable, privately financed, manned spaceship capable of carrying three people to 100 kilometers above the Earth's surface twice within two weeks. The prize was awarded in 2004 to Mojave Aerospace Ventures and, since then, the award has contributed to creating a new private space industry. Recently, X-Prize has introduced a spin-off for-profit venture, HeroX, a kind of “Kickstarter” for X-Prize-type competitions. The platform allows anyone to post their own competition.

Those who think they have the best solution can then submit their entries to win a cash prize. Another successful incentivized contest is the Qualcomm Tricorder X Prize, which offers a US$7 million grand prize, a US$2 million second prize, and a US$1 million third prize to the best among the finalists. Entrants must offer automatic, non-invasive health diagnostics packaged into a single portable device that weighs no more than 5 pounds (2.3 kg) and is able to diagnose over a dozen medical conditions, including whooping cough, hypertension, mononucleosis, shingles, melanoma, HIV, and osteoporosis.

Incentivized competitions have proven effective in supporting solutions to global issues and in developing powerful new visions of the future that can potentially impact the lives of billions of people. The reason for this effectiveness lies in the “format” of these competitions.

Open idea contests include clear and well-defined objectives, which can be measured objectively in terms of performance/outcome, and a significant amount of financial resources to achieve those objectives. Further, incentive competitions target only “stretch goals”, very ambitious (and risky) objectives that require very innovative strategies and original methodologies in order to be addressed.

Incentive competitions are also very “democratic”, in the sense that they are not limited to academic teams or research organizations, but are open to the involvement of large and small companies, start-ups, governments and even single individuals.

11:16 Posted in Blue sky, Creativity and computers | Permalink | Comments (0)

Apr 27, 2016

Predictive Technologies: Can Smart Tools Augment the Brain's Predictive Abilities?

Feb 18, 2016

A S.E.T.I. for psychology

The recent debate about the issue of reproducibility in psychology led me to question whether 21st-century scientific psychology should still regard the “conventional” experimental approach as the prime method of inquiry.

Even the most controlled lab experiments produce results that are difficult to generalize to real life, because of the artificiality of the setting. On the other hand, field and natural experiments are higher in ecological validity (since they are carried out in real-world situations) but are hard to control and replicate. Not to mention that most psychology research is done primarily on undergraduate students.

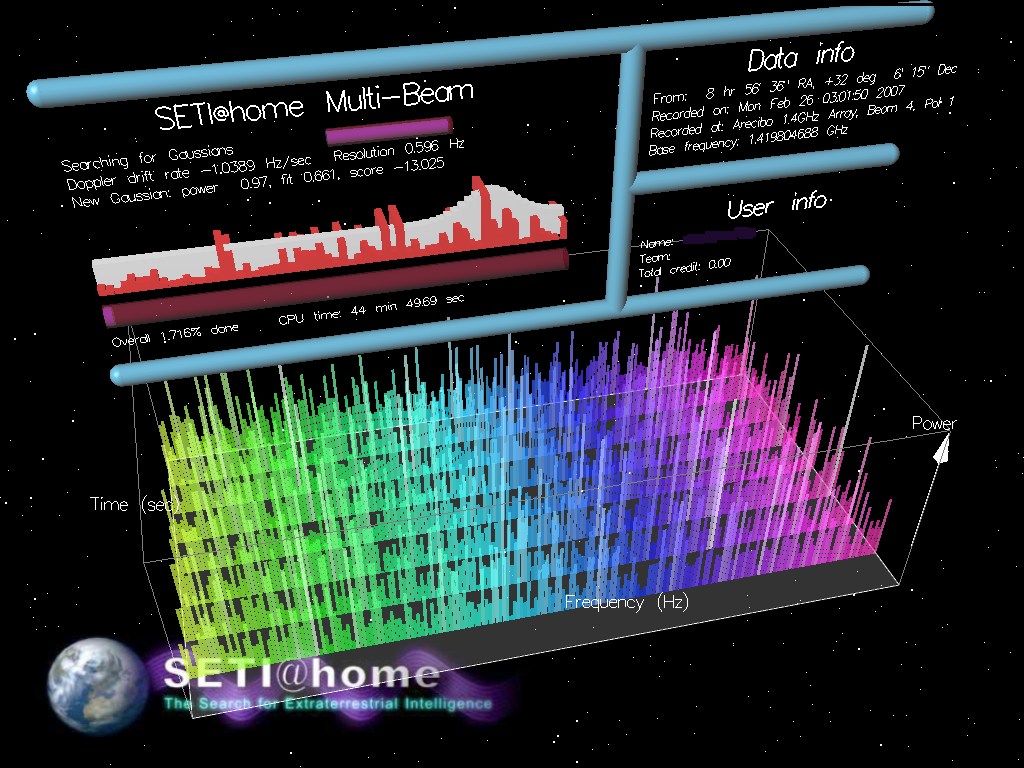

I suggest that the digital revolution and the emergence of “citizen science” could offer a totally new research approach to psychology.

Every second, social media, sensors and mobile tools generate massive amounts of data concerning people’s behavior and activities. According to a recent forecast by networking company Cisco concerning global mobile data traffic growth trends, global mobile data traffic reached 3.7 exabytes per month at the end of 2015, up from 2.1 exabytes per month at the end of 2014. Mobile data traffic has grown 4,000-fold over the past 10 years and almost 400-million-fold over the past 15 years. Mobile networks carried fewer than 10 gigabytes per month in 2000, and less than 1 petabyte per month in 2005. (One exabyte is equivalent to one billion gigabytes, and one thousand petabytes.)
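The figures above can be cross-checked with a few lines of arithmetic. The short Python sketch below uses only the numbers quoted in this paragraph and decimal unit conversions; the variable and function names are illustrative. Since the 2000 and 2005 figures are upper bounds ("fewer than", "less than"), the resulting ratios are lower bounds on the actual growth.

```python
# Decimal unit conversions, as stated in the text.
GB_PER_EB = 1_000_000_000  # one exabyte = one billion gigabytes
PB_PER_EB = 1_000          # one exabyte = one thousand petabytes

# Global mobile data traffic in exabytes per month (figures quoted above).
traffic_eb = {2014: 2.1, 2015: 3.7}


def growth_factor(old, new):
    """Return how many times larger `new` is than `old`."""
    return new / old


# Year-on-year growth from end of 2014 to end of 2015.
yoy = growth_factor(traffic_eb[2014], traffic_eb[2015])

# Lower bounds on long-term growth, using the upper bounds given for
# 2000 (10 GB per month) and 2005 (1 PB per month).
fold_since_2000 = growth_factor(10, traffic_eb[2015] * GB_PER_EB)
fold_since_2005 = growth_factor(1, traffic_eb[2015] * PB_PER_EB)

if __name__ == "__main__":
    print(f"2014 to 2015: {yoy:.2f}x ({(yoy - 1) * 100:.0f}% increase)")
    print(f"Since 2000: at least {fold_since_2000:,.0f}-fold")
    print(f"Since 2005: at least {fold_since_2005:,.0f}-fold")
```

Both lower bounds are of the same order as the "almost 400-million-fold" and "4,000-fold" growth figures cited from the forecast.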

The analysis of these large-scale “digital footprints” may open new avenues for discovery to psychologists, revealing patterns that would otherwise be impossible to detect through conventional experimental methods. A cloud-based open data portal could be designed to gather data streams made available by volunteering citizens, following protocols and guidelines developed by scientists – think about it as a sort of “S.E.T.I.” project for psychology. Authorized researchers could access these shared datasets to carry out collective analyses and interpretations.

I argue that the introduction of collective digital experiments may offer psychology novel opportunities to advance its research, and eventually achieve the rigour of natural sciences.

23:05 Posted in Blue sky | Permalink | Comments (0)

Nov 17, 2015

The new era of Computational Biomedicine

In recent years, the increasing convergence between nanotechnology, biomedicine and health informatics has generated massive amounts of data, which are changing the way healthcare research, development, and applications are done.

Clinical data integrate physiological data, enabling detailed descriptions of various healthy and diseased states, progression, and responses to therapies. Furthermore, mobile and home-based devices monitor vital signs and activities in real-time and communicate with personal health record services, personal computers, smartphones, caregivers, and health care professionals.

However, our ability to analyze and interpret multiple sources of data lags far behind today’s data generation and storage capacity. Consequently, mathematical and computational models are increasingly used to help interpret massive biomedical data produced by high-throughput genomics and proteomics projects. Advanced applications of computer models that enable the simulation of biological processes are used to generate hypotheses and plan experiments.

The emerging discipline of computational biomedicine is concerned with the application of computer-based techniques, particularly modelling and simulation, to human health. For almost ten years, this vision has been at the core of a European-funded program called “Virtual Physiological Human”. The goal of this initiative is to develop next-generation computer technologies to integrate all information available for each patient, and to generate computer models capable of predicting how the health of that patient will evolve under certain conditions.

In particular, this programme is expected, over the next decades, to transform the study and practice of healthcare, moving it towards the priorities known as ‘4P's’: predictive, preventative, personalized and participatory medicine. Future developments of computational biomedicine may provide the possibility of developing not just qualitative but truly quantitative analytical tools, that is, models, on the basis of the data available through the system just described. Information not available today (large cohort studies nowadays include thousands of individuals, whereas here we are talking about millions of records) will be available for free. Large cohorts of data will be available for online consultation and download. Integrative and multi-scale models will benefit from the availability of this large amount of data by using parameter estimation in a statistically meaningful manner. At the same time, distribution maps of important parameters will be generated and continuously updated. Users will be given the opportunity to express their interest in a given model, so as to set up a consensus-based model selection process. Moreover, models should be open for consultation and annotation. Flexible and user-friendly services have many potential positive outcomes. Some examples include simulation of case studies, testing and validation of specific assumptions on the nature of related diseases, understanding the worldwide distribution of these parameters and disease patterns, the ability to hypothesize intervention strategies in cases such as the spreading of an infectious disease, and advanced risk modeling.

11:25 Posted in Blue sky, ICT and complexity, Physiological Computing, Positive Technology events | Permalink

Apr 05, 2015

Are you concerned about AI?

Recently, a growing number of opinion leaders have started to point out the potential risks associated with the rapid advancement of Artificial Intelligence. This shared concern has led an interdisciplinary group of scientists, technologists and entrepreneurs to sign an open letter (http://futureoflife.org/misc/open_letter/), drafted by the Future of Life Institute, which focuses on priorities to be considered as Artificial Intelligence develops as well as on the potential dangers posed by this paradigm.

The concern that machines may soon dominate humans, however, is not new: in the last thirty years, this topic has been widely represented in movies (e.g., Terminator, The Matrix), novels and various interactive arts. For example, Australian-based performance artist Stelarc has incorporated themes of cyborgization and other human-machine interfaces in his work, by creating a number of installations that confront us with the question of where the human ends and technology begins.

In his well-received 2005 book “The Singularity Is Near: When Humans Transcend Biology” (Viking Penguin: New York), inventor and futurist Ray Kurzweil argued that Artificial Intelligence is one of the interacting forces that, together with genetics, robotics and nanotechnology, may soon converge to overcome our biological limitations and usher in the beginning of the Singularity, during which Kurzweil predicts that human life will be irreversibly transformed. According to Kurzweil, the Singularity will take place around 2045 and will probably represent the most extraordinary event in all of human history.

Ray Kurzweil’s vision of the future of intelligence is at the forefront of the transhumanist movement, which considers scientific and technological advances as a means to augment human physical and cognitive abilities, with the final aim of improving and even extending life. According to transhumanists, however, the choice whether to benefit from such enhancement options should generally reside with the individual. The concept of transhumanism has been criticized, among others, by the influential American philosopher of technology Don Ihde, who pointed out that no technology will ever be completely internalized, since any technological enhancement implies a compromise. Ihde has distinguished four different relations that humans can have with technological artifacts. In particular, in the “embodiment relation” a technology becomes (quasi)transparent, allowing a partial symbiosis of ourselves and the technology. In wearing eyeglasses, as Ihde exemplifies, I do not look “at” them but “through” them at the world: they are already assimilated into my body schema, withdrawing from my perceiving.

According to Ihde, there is a doubled desire which arises from such embodiment relations: “It is the doubled desire that, on one side, is a wish for total transparency, total embodiment, for the technology to truly "become me."(...) But that is only one side of the desire. The other side is the desire to have the power, the transformation that the technology makes available. Only by using the technology is my bodily power enhanced and magnified by speed, through distance, or by any of the other ways in which technologies change my capacities. (…) The desire is, at best, contradictory. I want the transformation that the technology allows, but I want it in such a way that I am basically unaware of its presence. I want it in such a way that it becomes me. Such a desire both secretly rejects what technologies are and overlooks the transformational effects which are necessarily tied to human-technology relations. This illusory desire belongs equally to pro- and anti-technology interpretations of technology.” (Ihde, D. (1990). Technology and the Lifeworld: From Garden to Earth. Bloomington: Indiana, p. 75).

Despite the different philosophical stances and assumptions on what our future relationship with technology will look like, there is little doubt that these questions will become more pressing and acute in the coming years. In my personal view, technology should not be viewed as a means to replace human life, but as an instrument for improving it. As William S. Haney II suggests in his book “Cyberculture, Cyborgs and Science Fiction: Consciousness and the Posthuman” (Rodopi: Amsterdam, 2006), “each person must choose for him or herself between the technological extension of physical experience through mind, body and world on the one hand, and the natural powers of human consciousness on the other as a means to realize their ultimate vision.” (ix, Preface).

23:26 Posted in AI & robotics, Blue sky, ICT and complexity | Permalink | Comments (0)

Oct 12, 2014

New Material May Help Us To Breathe Underwater

Scientists in Denmark announced they have developed a substance that absorbs, stores and releases huge amounts of oxygen.

The substance is so effective that just a few grains are capable of storing enough oxygen for a single human breath while a bucket full of the new material could capture an entire room of O2.

With the new material there are hopes those requiring medical oxygen might soon be freed from carrying bulky tanks, while SCUBA divers might also be able to use the material to absorb oxygen from water, allowing them to stay submerged for significantly longer.

The substance was developed by tinkering with the molecular structure of cobalt, a chemical element that when found in meteoric iron, resembles a silver-gray metal.

Read More: University of Southern Denmark

21:59 Posted in Blue sky, Research tools | Permalink | Comments (0)

Oct 06, 2014

Is the metaverse still alive?

In the last decade, online virtual worlds such as Second Life and the like have become enormously popular. Since their appearance on the technology landscape, many analysts have regarded shared 3D virtual spaces as a disruptive innovation that would render the Web itself obsolete.

This high expectation attracted significant investments from large corporations such as IBM, which started building their virtual spaces and offices in the metaverse. Then, when it became clear that these promises would not be kept, disillusionment set in and virtual worlds started losing their edge. However, this is not a new phenomenon in high-tech: it happens over and over again.

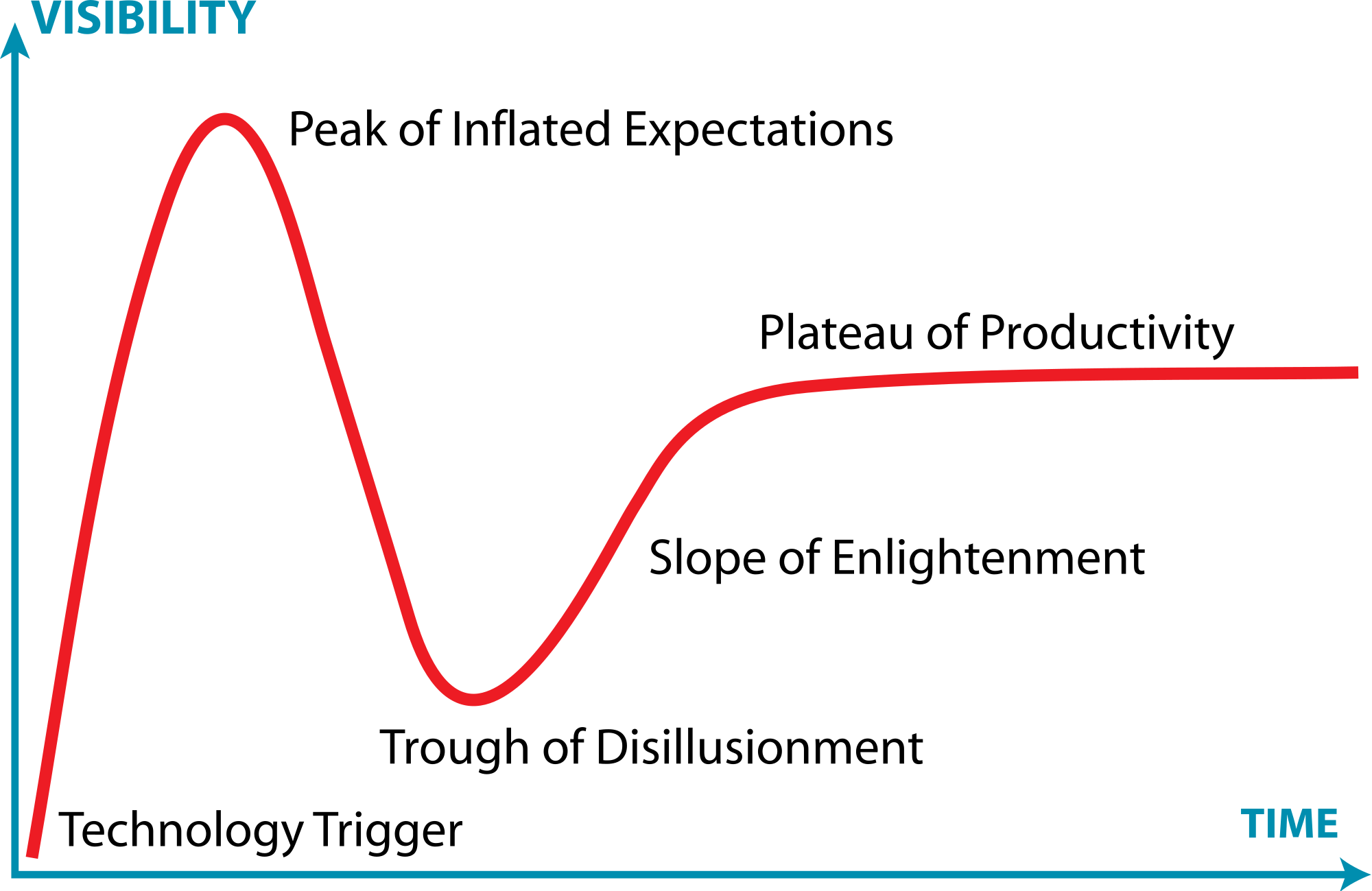

The US consulting company Gartner has developed a very popular model to describe this effect, called the “Hype Cycle”. The Hype Cycle provides a graphic representation of the maturity and adoption of technologies and applications.

It consists of five phases, which show how emerging technologies will evolve.

In the first, “technology trigger” phase, a new technology is launched which attracts the interest of media. This is followed by the “peak of inflated expectations”, characterized by a proliferation of positive articles and comments, which generate overexpectations among users and stakeholders.

In the next, “trough of disillusionment” phase, these exaggerated expectations are not fulfilled, resulting in a growing number of negative comments generally followed by a progressive indifference.

In the “slope of enlightenment” phase, the technology's potential for further applications becomes more broadly understood and an increasing number of companies start using it.

In the final, “plateau of productivity” stage, the emerging technology establishes itself as an effective tool and mainstream adoption takes off.
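For readers who think in code, the five phases can be sketched as an ordered enumeration. This is only an illustration of the sequence described above, not a Gartner artifact; the class and function names are hypothetical.

```python
from enum import IntEnum


class HypePhase(IntEnum):
    """The five Hype Cycle phases, numbered in the order described above."""
    TECHNOLOGY_TRIGGER = 1
    PEAK_OF_INFLATED_EXPECTATIONS = 2
    TROUGH_OF_DISILLUSIONMENT = 3
    SLOPE_OF_ENLIGHTENMENT = 4
    PLATEAU_OF_PRODUCTIVITY = 5


def next_phase(phase):
    """Return the phase that follows `phase`, or None after the last one."""
    if phase is HypePhase.PLATEAU_OF_PRODUCTIVITY:
        return None
    return HypePhase(phase + 1)


if __name__ == "__main__":
    phase = HypePhase.TECHNOLOGY_TRIGGER
    while phase is not None:
        print(phase.value, phase.name.replace("_", " ").lower())
        phase = next_phase(phase)
```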

So what stage of the hype cycle are virtual worlds in now?

After the 2006-2007 peak, metaverses entered the downward phase of the hype cycle, progressively losing media interest, investments and users. Many high-tech analysts still consider this decline an irreversible process.

However, the negative outlook that sent shared virtual worlds into the trough of disillusionment may soon be reversed. This is thanks to the new interest in virtual reality raised by the Oculus Rift (recently acquired by Facebook for $2 billion), Sony’s Project Morpheus and similar immersive displays, which are still at the takeoff stage in the hype cycle.

Oculus Rift's chief scientist Michael Abrash makes no mystery of the fact that his main ambition has always been to build a metaverse such as the one described in Neal Stephenson's (1992) cyberpunk novel Snow Crash. As he writes on the Oculus blog:

"Sometime in 1993 or 1994, I read Snow Crash and for the first time thought something like the Metaverse might be possible in my lifetime."

Furthermore, despite the negative comments and disappointed expectations, the metaverse keeps attracting new users: on its 10th anniversary, on June 23rd 2013, an infographic reported that Second Life had over 1 million monthly visitors from around the world, more than 400,000 new accounts per month, and 36 million registered users.

So will Michael Abrash’s metaverse dream come true? Even if one looks into the crystal ball of the hype cycle, the answer is not easily found.

22:36 Posted in Blue sky, Serious games, Telepresence & virtual presence, Virtual worlds | Permalink | Comments (0)

Aug 03, 2014

Fly like a Birdly

Birdly is a full body, fully immersive, Virtual Reality flight simulator developed at the Zurich University of the Arts (ZHdK). With Birdly, you can embody an avian creature, the Red Kite, visualized through Oculus Rift, as it soars over the 3D virtual city of San Francisco, heightened by sonic, olfactory, and wind feedback.

21:38 Posted in Blue sky, Creativity and computers, Telepresence & virtual presence, Virtual worlds | Permalink | Comments (0)

Apr 06, 2014

Glass brain flythrough: beyond neurofeedback

Via Neurogadget

Researchers have developed a new way to explore the human brain in virtual reality. The system, called Glass Brain, was developed by Philip Rosedale, creator of the famous game Second Life, and Adam Gazzaley, a neuroscientist at the University of California San Francisco. It combines brain scanning, brain recording and virtual reality to allow a user to journey through a person’s brain in real time.

Read the full story on Neurogadget

23:52 Posted in Biofeedback & neurofeedback, Blue sky, Information visualization, Physiological Computing, Virtual worlds | Permalink | Comments (0)

Feb 16, 2014

How much science is there?

The accelerating pace of scientific publishing and the rise of open access, as depicted by xkcd.com cartoonist Randall Munroe.

14:31 Posted in Blue sky, Information visualization | Permalink | Comments (0)

Feb 09, 2014

Nick Bostrom: The intelligence explosion hypothesis

Via IEET

Nick Bostrom is a Swedish philosopher at the University of Oxford known for his work on existential risk and the anthropic principle, covered in books such as Global Catastrophic Risks, Anthropic Bias and Human Enhancement. He holds a PhD from the London School of Economics. He is currently the director of both The Future of Humanity Institute and the Programme on the Impacts of Future Technology as part of the Oxford Martin School at Oxford University.

22:31 Posted in Blue sky, Ethics of technology | Permalink | Comments (0)

Dec 22, 2013

Cubli

Researchers at the Institute for Dynamic Systems and Control, ETH Zurich, Switzerland, developed a small cube (15 × 15 × 15 cm) that can jump up and balance on its corner. Reaction wheels mounted on three faces of the cube rotate at high angular velocities and then brake suddenly, causing the Cubli to jump up. Once the Cubli has almost reached the corner stand-up position, controlled motor torques are applied to make it balance on its corner. In addition to balancing, the motor torques can also be used to achieve a controlled fall such that the Cubli can be commanded to fall in any arbitrary direction. Combining these three abilities - jumping up, balancing, and controlled falling - the Cubli is able to 'walk'.

20:59 Posted in Blue sky | Permalink | Comments (0)

Oct 31, 2013

Daniel Dennett – If Brains Are Computers, What Kind Of Computers Are They?

Source: Future of Humanity Institute

23:33 Posted in Blue sky | Permalink | Comments (0)

Jul 23, 2013

Augmented Reality - Projection Mapping

22:50 Posted in Augmented/mixed reality, Blue sky, Cyberart | Permalink | Comments (0)