Nov 25, 2007

Virtual Eve

Researchers from Massey University have created a virtual teacher called Eve that can ask questions, give feedback, discuss solutions, and express emotions. To develop the software for this system, the Massey team observed children and their interactions with teachers and captured them in thousands of images. From these images of facial expressions, gestures and body movements, they developed programs that capture and recognise facial expression, body movement, and significant bio-signals such as heart rate and skin resistance.

(Massey University)

23:30 Posted in Emotional computing | Permalink | Comments (0) | Tags: virtual humans

Nov 04, 2007

Beat wartime empathy device

Via Pasta & Vinegar

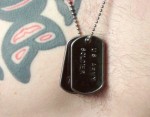

Designer Dominic Muren has created a device that allows a civilian to feel the heartbeat of a soldier:

I think we can all agree that war has become too impersonal. Media coverage emphasizes our distance, and most decision makers in congress don't have children who fight. Beat connects you very directly to a single soldier by thumping their recorded heartbeat against your chest. If they are calm, or worried, or under stress, you feel it. If they die, the heartbeat you feel dies too. If we are going to continue to fight wars, we need better methods of feedback like this one so the costs are more visceral and real for us. Imagine if all politicians were required to wear one of these!!

19:25 Posted in Emotional computing | Permalink | Comments (0) | Tags: affective computing

Oct 08, 2007

Mind-reading computers respond to users' moods

Researchers at Tufts University are developing a system that monitors users' experiences while they work. The system is based on functional near-infrared spectroscopy (fNIRS), a technology that uses light to monitor brain blood flow as a proxy for the workload stress a user may experience when performing an increasingly difficult task. The aim is for the computer to respond to users' thoughts of frustration or boredom.

"New evaluation techniques that monitor user experiences while working with computers are increasingly necessary," said Robert Jacob, computer science professor and researcher. "One moment a user may be bored, and the next moment, the same user may be overwhelmed. Measuring mental workload, frustration and distraction is typically limited to qualitatively observing computer users or to administering surveys after completion of a task, potentially missing valuable insight into the users' changing experiences."

00:22 Posted in Emotional computing | Permalink | Comments (0) | Tags: stress

Sep 05, 2007

Affective diary

Via InfoAesthetics

From the project website:

The affective diary assembles sensor data, captured from the user and uploaded via their mobile phone, to form an ambiguous, abstract colourful body shape. With a range of other materials from the mobile phone, such as text and MMS messages, photographs, etc., these shapes are made available to the user. Combining these materials, the diary is designed to invite reflection and to allow the user to piece together their own stories.

18:33 Posted in Emotional computing, Information visualization | Permalink | Comments (0) | Tags: information visualization, emotional computing

Jul 26, 2007

Visual relaxation landscapes

This website shows beautiful animated landscape loops designed for visual relaxation (Flash plugin required).

19:11 Posted in Emotional computing | Permalink | Comments (0) | Tags: information visualization, emotional computing

Dec 20, 2006

ESP: Emotional Social Intelligence Prosthesis

Technology does not naturally sense nonverbal cues such as facial expressions and tone of voice, and does not easily acquire common sense knowledge about people. These "mindreading" functions also do not come naturally for some people, such as those diagnosed with autism. ESP is an affective wearable system that explores ways to augment and enhance the wearer's emotional-social intelligence. ESP's computational model of mind-reading infers in real time affective-cognitive mental states from nonverbal cues such as head and facial displays of people, and communicates these inferences to the wearer via visual, sound, and tactile feedback.

Our work leverages the advances in affect sensing and perception to (1) develop technologies that are sensitive to people's affective-cognitive states; (2) advance autism research and (3) create new technologies that enhance the social-emotional intelligence of people diagnosed with autism, as well as those who are not.

The project addresses open research challenges pertaining to whether machines can augment social interactions in a way that improves human to human communication. A longer term aim is to use the prosthesis as an assistive and therapeutic device for people with Autism Spectrum Disorder.

00:27 Posted in Emotional computing | Permalink | Comments (0) | Tags: emotional computing

Dec 18, 2006

Public mood ring

Re-blogged from InfoAesthetics

Public mood ring is a physical installation inspired by the idea of a ring that translates the bearer's emotional condition into a changeable color hue...

link to the original post

18:05 Posted in Brain-computer interface, Emotional computing | Permalink | Comments (0)

Nov 29, 2006

Moodjam mood visualization

Via InfoAesthetics

Moodjam is an online tool that visualizes people's moods as beautiful color strips. Users can record their moods every hour, day, or week and share them with friends, family or co-workers.

22:19 Posted in Emotional computing, Information visualization | Permalink | Comments (0) | Tags: information visualization, emotional computing

Nov 07, 2006

SHOJI: Symbiotic Hosting Online Jog Instrument

From Pink Tentacle

Symbiotic Hosting Online Jog Instrument (SHOJI) is a system that monitors the feelings and behavior of the people in a room and relays the mood data to remote terminals, where it is displayed as full-color LED light.

In addition to constantly measuring the room’s environmental conditions, SHOJI terminals can detect the presence and movement of people, body temperature, and the nature of the activity in the room.

Read the full post on Pink Tentacle

23:21 Posted in Emotional computing | Permalink | Comments (0) | Tags: emotional computing

Oct 01, 2006

Color of My Sound

Color of My Sound is an Internet-based application that lets users assign colors to specific sounds. The project is inspired by the phenomenon of synesthesia, the mixing of the senses.

In CMS, users choose a sound category. Then, after listening, they can choose the color to which they are most strongly drawn. Finally, they can see how others voted for that particular sound.
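The listen, choose, compare flow described above can be sketched as a simple vote tally. This is only an illustration, not how Color of My Sound is actually built: the in-memory `votes` store and the function names `cast_vote` and `results` are hypothetical.

```python
from collections import Counter, defaultdict

# Hypothetical in-memory store: sound name -> Counter of colour votes.
votes = defaultdict(Counter)


def cast_vote(sound, colour):
    """Record one user's colour choice for a sound."""
    votes[sound][colour] += 1


def results(sound):
    """Return each colour's share of the votes for a sound, most popular first."""
    total = sum(votes[sound].values())
    # An unvoted sound has no colours, so the list is empty and no division occurs.
    return [(colour, count / total) for colour, count in votes[sound].most_common()]
```

After a user votes, calling `results` for the same sound gives the kind of "how others voted" summary the post mentions.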

The Color of My Sound's original prototype has recently won a Silver Summit Creative Award, and is up for a 2006 Webby, in the NetArt category.

See also Music Animation Machine & Wolfram Tones.

14:30 Posted in Emotional computing | Permalink | Comments (0) | Tags: emotional computing

Sep 30, 2006

Perimeters, Boundaries, and Borders


"Artists, architects, designers, and other practitioners are constantly fashioning new forms and challenging disciplinary boundaries as they employ techniques such as rapid prototyping and generative processes. In the exhibition Perimeters, Boundaries, and Borders, at Lancaster, UK's Citylab, organizers Fast-uk and Folly explore the range of objects, buildings, and products being conceptualized with the aid of digital technologies. Aoife Ludlow's 'Remember to Forget?' is a series of jewelry designs that envisioned accessories incorporating RFID tags that allow the wearer to record information and emotions associated with those special items that we put on daily. Tavs Jorgensen uses a data glove in his 'Motion in Form' project. After gesturing around an object, data collected by the glove is given physical shape using CNC (Computer Numerical Control) milling, creating representations of the movements in materials such as glass or ceramics. Addressing traces of a different sort is Cyclone.soc, a data mapping piece by Gavin Bailey and Tom Corby. These works and many more examples from the frontiers of art and design are on view until October 21st." Rhizome News.

19:39 Posted in Emotional computing, Pervasive computing | Permalink | Comments (0) | Tags: ambient intelligence, emotional computing, pervasive technology, persuasive technology

Beyond emoticons

Anthony Boucouvalas and colleagues at Bournemouth University in the UK have created a system that contorts an image of a user's face to express different emotions, New Scientist reports. According to Boucouvalas and colleagues, the system might be used to enrich text-based internet chat.

From the article:

A user first uploads a picture of their face with a "neutral" expression. Then they use their mouse to mark the ends of their eyebrows, the corners of their mouth and the edges of their eyes and lips. The software uses these points to morph the face to express different emotions: happiness, sadness, fear, anger, surprise, and disgust. A user can select an emotion and one of three intensity levels when using the system.
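As a rough illustration of the idea in the passage above, marked points can be shifted by an emotion-specific offset scaled by the chosen intensity level. This is a minimal sketch under stated assumptions, not the Bournemouth system: the point names, the `OFFSETS` values, and the function `morph_points` are all hypothetical, and it only moves the points (warping the photo around them is left out).

```python
# Hypothetical offsets (dx, dy) in pixels at full intensity; y grows downward.
# Only a few emotions and points are filled in for illustration.
OFFSETS = {
    "happiness": {"mouth_left": (-2, -6), "mouth_right": (2, -6)},
    "sadness": {"mouth_left": (0, 6), "mouth_right": (0, 6)},
    "surprise": {"brow_left": (0, -9), "brow_right": (0, -9)},
}
LEVELS = 3  # the article mentions three intensity levels


def morph_points(neutral, emotion, level):
    """Return a copy of the neutral points moved toward the given emotion."""
    if not 1 <= level <= LEVELS:
        raise ValueError("level must be between 1 and %d" % LEVELS)
    scale = level / LEVELS
    moved = dict(neutral)
    for name, (dx, dy) in OFFSETS[emotion].items():
        if name in moved:  # skip points the user did not mark
            x, y = moved[name]
            moved[name] = (x + dx * scale, y + dy * scale)
    return moved
```

Points that an emotion does not mention stay where the user marked them, so the neutral face is the starting point for every expression.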

Read the full story here

See also Illustrations from Smiley Arena

19:05 Posted in Emotional computing | Permalink | Comments (0) | Tags: affective computing

Sep 21, 2006

E-garments

Via WMMNA

Philips Design has prototyped two garments that demonstrate how electronics can be incorporated into fabrics and clothes to express the emotions and personality of the wearer.

Bubelle, the "blushing dress", comprises two layers; the inner one is equipped with sensors that respond to changes in the wearer's emotions and projects them onto the outer textile. It behaves differently depending on who is wearing it. The other prototype is Frisson, a body suit that reacts to being blown on by igniting a private constellation of tiny LEDs. Both measure skin signals and change light emission through biometric sensing technology.

Design Probe is an in-house far-future research program that considers what lifestyles might be like in 2020. The SKIN probe project is part of the program and challenges the notion that our lives are automatically better because they are more digital. It looks at more 'analog' phenomena like emotional sensing, exploring technologies that are 'sensitive' rather than 'intelligent'. Two outfits have been developed as part of SKIN to identify a new way of communicating with those around us by using garments as proxies to convey deep feelings that are difficult to express in words.

The Bubelle - Blush Dress comprises two layers, the inner layer of which is equipped with sensors that respond to changes in the wearer's emotions and projects them onto the outer textile. The body suit Frisson has LEDs that illuminate according to the wearer's state of excitement. Both measure skin signals and change light emission through biometric sensing technology.

Download images here

Read the full press release

10:35 Posted in Emotional computing, Wearable & mobile | Permalink | Comments (0) | Tags: emotional computing, wearable

Sep 18, 2006

Robots for ageing society

The CIRT consortium, made up of Tokyo University and a group of seven companies (Toyota, Olympus, Sega, Toppan Printing, Fujitsu, Matsushita, and Mitsubishi), has started a project to develop robotic assistants for Japan’s aging population.

The robots envisioned by the project should support the elderly with housework and serve as personal transportation capable of replacing the automobile.

The Ministry of Education, Culture, Sports, Science and Technology (MEXT) will be the major sponsor of the research, whose total cost is expected to be about 1 billion yen (US$9 million) per year.

14:20 Posted in AI & robotics, Emotional computing | Permalink | Comments (0) | Tags: robotics, artificial intelligence

Aug 05, 2006

e-CIRCUS

The project e-Circus (Education through Characters with emotional Intelligence and Role-playing Capabilities that Understand Social interaction) aims to develop synthetic characters that interact with pupils in a virtual school, to support social and emotional learning in the real classroom. This will be achieved through virtual role-play with synthetic characters that establish credible and empathic relations with the learners.

The project consortium, which is funded under the EU 6th Framework Program, includes researchers from computer science, education and psychology from the UK, Portugal, Italy and Germany. Teachers and pupils will be included in the development of the software as well as a framework for using it in the classroom context. The e-Circus software will be tested in schools in the UK and Germany in 2007, evaluating not only the acceptance of the application among teachers and pupils but also whether the approach, as an innovative part of the curriculum, actually helps to reduce bullying in schools.

11:48 Posted in AI & robotics, Emotional computing | Permalink | Comments (0) | Tags: artificial intelligence

Aug 03, 2006

Empathic painting

Via New Scientist

A team of computer scientists (Maria Shugrina and Margrit Betke from Boston University, US, and John Collomosse from Bath University, UK) has created a video display system (the "Empathic Painting") that tracks the expressions of onlookers and metamorphoses to match their emotions:

"For example, if the viewer looks angry it will apply a red hue and blurring. If, on the other hand, they have a cheerful expression, it will increase the brightness and colour of the image." (New Scientist)

See a video of the empathic painting (3.4 MB .avi, codec required).
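The mood-to-image mapping quoted above can be sketched as a per-pixel colour adjustment. This is an illustrative sketch only, not the researchers' rendering method: the function name `apply_mood`, the mood labels, and the adjustment amounts are assumptions, and the blurring step is left out.

```python
def apply_mood(pixels, mood):
    """Adjust a list of (r, g, b) pixels, each channel an int in 0..255.

    The amounts below are hypothetical: "angry" pushes the image toward red,
    "cheerful" brightens it, and any other mood leaves it unchanged.
    """
    out = []
    for r, g, b in pixels:
        if mood == "angry":
            # Boost red and damp green and blue to give a red hue.
            r, g, b = min(255, r + 60), g * 7 // 10, b * 7 // 10
        elif mood == "cheerful":
            # Scale every channel up by 20 percent, capped at 255.
            r, g, b = (min(255, c * 6 // 5) for c in (r, g, b))
        out.append((r, g, b))
    return out
```

In a full system the `mood` argument would come from an expression tracker watching the viewer; here it is simply passed in as a string.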

20:50 Posted in Cyberart, Emotional computing | Permalink | Comments (0) | Tags: emotional computing

Aug 02, 2006

The Huggable

Via Siggraph2006 Emerging Technology website

The Huggable is a robotic pet developed by MIT researchers for therapy applications in children's hospitals and nursing homes, where pets are not always available. The robotic teddy has full-body sensate skin and smooth, quiet voice coil actuators, and is able to relate to people through touch. Further features include "temperature, electric field, and force sensors which it uses to sense the interactions that people have with it. This information is then processed for its affective content, such as, for example, whether the Huggable is being petted, tickled, or patted; the bear then responds appropriately".

The Huggable was unveiled at the Siggraph2006 conference in Boston. From the conference website:

Enhanced Life

Over the past few years, the Robotic Life Group at the MIT Media Lab has been developing "sensitive skin" and novel actuator technologies in addition to our artificial-intelligence research. The Huggable combines these technologies in a portable robotic platform that is specifically designed to leave the lab and move to healthcare applications.

Goals

The ultimate goal of this project is to evaluate the Huggable's usefulness as a therapy for those who have limited or no access to companion-animal therapy. In collaboration with nurses, doctors, and staff, the technology will soon be applied in pilot studies at hospitals and nursing homes. By combining Huggable's data-collection capabilities with its sensing and behavior, it may be possible to determine early onset of a person's behavior change or detect the onset of depression. The Huggable may also improve day-to-day life for those who may spend many hours in a nursing home alone staring out a window, and, like companion-animal therapy, it could increase their interaction with other people in the facility.

Innovations

The core technical innovation is the "sensitive skin" technology, which consists of temperature, electric-field, and force sensors all over the surface of the robot. Unlike other robotic applications where the sense of touch is concerned with manipulation or obstacle avoidance, the sense of touch in the Huggable is used to determine the affective content of the tactile interaction. The Huggable's algorithms can distinguish petting, tickling, scratching, slapping, and poking, among other types of tactile interactions. By combining the sense of touch with other sensors, the Huggable detects where a person is in relation to itself and responds with relational touch behaviors such as nuzzling.

Most robotic companions use geared DC motors, which are noisy and easily damaged. The Huggable uses custom voice-coil actuators, which provide soft, quiet, and smooth motion. Most importantly, if the Huggable encounters a person when it tries to move, there is no risk of injury to the person.

Another core technical innovation is the Huggable's combination of 802.11g networking with a robotic companion. This allows the Huggable to be much more than a fun, interactive robot. It can send live video and data about the person's interactions to the nursing staff. In this mode, the Huggable functions as a team member working with the nursing home or hospital staff and the patient or resident to promote the Huggable owner's overall health.

Vision

As poorly staffed nursing homes and hospitals become larger and more overcrowded, new methods must be invented to improve the daily lives of patients or residents. The Huggable is one of these technological innovations. Its ability to gather information and share it with the nursing staff can detect problems and report emergencies. The information can also be stored for later analysis by, for example, researchers who are studying pet therapy.

12:05 Posted in AI & robotics, Emotional computing, Serious games | Permalink | Comments (0) | Tags: cybertherapy, robotics, artificial intelligence

Jul 27, 2006

Mouthpiece

Re-blogged from WMMNA

The Mouthpiece has been designed to help strangers who find it difficult to express their feelings or opinions face to face. A small LCD monitor and loudspeakers cover the mouth of the wearer like a gag. The equipment replaces the real act of speech with pre-recorded, edited and electronically perfected statements, questions, answers, stories, etc.

21:28 Posted in Cyberart, Emotional computing | Permalink | Comments (0)

The Endless Forest

12:34 Posted in Cyberart, Emotional computing | Permalink | Comments (0) | Tags: emotional computing

Jul 21, 2006

My beating heart

My Beating Heart is a haptic relaxation pillow that gently beats out a slow, steady heart-like rhythm. The $120 heart-shaped pillow uses a rumble pack-style haptic system so that you can feel the heartbeat as you hold it.

From the product's website:

My Beating Heart is a soft huggable heart with a soothing heartbeat you can really feel. When hugging the heart, the tactile heartbeat reminds you of holding a pet or a loved-one. Hold the heart a moment and you'll begin to sense your own heartbeat slowly syncing with My Beating Heart's carefully designed rhythm. My Beating Heart is designed to help you relax, daydream, meditate, and nap.

14:34 Posted in Emotional computing | Permalink | Comments (0) | Tags: emotional computing