Mar 23, 2007

Korean Startup Xtive fights Online Addiction with Subliminal Sound

Via Korea Times

Xtive, a Korean venture start-up, has developed a subliminal sound sequence that it claims can prevent obsessive use of online games, giving hope to game addicts, reports The Korea Times.

From the Korea Times interview:

"We incorporated messages into an acoustic sound wave telling gamers to stop playing. The messages are told 10,000 to 20,000 times per second," Xtive President Yun Yun-hae said.

"Game users can’t recognize the sounds. But their subconscious is aware of them and the chances are high they will quit playing," the 35-year-old Yun said. "Tests tell us the sounds work."

Xtive, which was established in 2005, spent about a year creating the sound sequence, which is aimed at the concern that Korean teenagers spend too much time playing computer games.

Addiction to online games has become a serious social problem, and some gamers have even died after long sessions in front of the computer.

Experts say roughly 10 to 20 percent of high school students can be categorized as Web junkies who need treatment, and many believe that is a conservative estimate.

"Experiences tell us kids or adolescents simply don’t stop playing games when faced with forceful measures. Such attempts can also cause many side effects," Yun said.

"But our newly developed sound sequence tells them to stop playing on their own. We think this can make a real difference in the war against obsessive game play," he said.

Yun said Xtive plans to commercialize the phonogram along with the government and game companies.

"Game companies can install a system, which delivers the inaudible sounds after it recognizes a young user has kept playing after a preset period of time," Yun said.

Xtive applied for a domestic patent for the phonogram and is looking to take advantage of the technology in other sectors.

"We can easily change the messages. In this sense, the potential for this technology is exponential," Yun said.

10:30 Posted in Persuasive technology | Permalink | Comments (0) | Tags: game addiction

Mar 19, 2007

An Interview with Virtual Reality Pioneer Jaron Lanier

The Human Productivity Lab blog has a very interesting interview with Jaron Lanier about the reasons why virtual reality technology has not yet become commonplace.

Here is an excerpt from the interview:

JARON LANIER: Well, first of all, I personally think that a lot more could have happened with Virtual Reality than has happened. I feel that what went wrong with VR was that a decent software standard platform didn't happen. The ones that were most in the forefront like VRML just didn't work well enough. So to get back to your question: what were people looking for? I still believe that what people really want from VR is to be able to touch upon the feeling of being able to share a dream with someone else - to take a little step away from the sense of isolation that people feel today. I think this is a universal and very healthy desire. (VR isn't the only way to address it obviously.)

But in VR, at some point, you would be able to be inside this place with other people where you were making it up as you went along. What people really wanted was a kind of intimacy where you're making up a dream together with other people. You're all experiencing it. I was calling it post-symbolic communication. The basic idea is that people thought that with VR they would be able to experience a kind of intense contact with imagination, some sort of fusion of the kind of extremes of aesthetics and emotional experience you might have when you open up the constraints of reality.

You can divide the requirements of the technology that will give you that into two pieces. You can call one piece the production quality or production standards - how detailed is the resolution? How realistic do surfaces look? That boils down to fast computers, high quality sensors and displays: the tech underpinnings of it all. But then there's this other side; the software side, which involves how you can get a virtual world to do things. My feeling is that even a low-res virtual world can get people the kind of experience that I was just describing. And I think we did have some great moments and great experiences in the '80s, even with very low-res systems that were available then. I think that the failure since then is that the software that's been developed is very rigid.

There are a couple of reasons for this. One was that there was a bizarre alliance between people doing military simulation and people doing recreational gaming. There are a lot of different kinds of games, so I don't want to put them all under one critical tent here. I think a lot of them are OK. But one of the dominant ideas is that a person who is playing is capable of being in the location, moving, shooting, or dying [laughs]. That's pretty much it. You might pick up an amulet or something, but it doesn't give you a lot to do.

00:45 Posted in Virtual worlds | Permalink | Comments (0) | Tags: virtual worlds

A Brain On/Off Switch

Re-blogged from Medgadget

Researchers at the Stanford Medical Center have developed a procedure that allows scientists to turn selected parts of the brain on and off. The tool may be used as a treatment option for people with different psychiatric problems.

From the MIT Technology Review report:

While scientists know something about the chemical imbalances underlying depression, it's still unclear exactly which cells, or networks of cells, are responsible for it. In order to identify the circuits involved in such diseases, scientists must be able to turn neurons on and off. Standard methods, such as electrodes that activate neurons with jolts of electricity, are not precise enough for this task, so Deisseroth, postdoc Ed Boyden (now an assistant professor at MIT; see "Engineering the Brain"), and graduate student Feng Zhang developed a neural controller that can activate specific sets of neurons.

They adapted a protein from a green alga to act as an "on switch" that neurons can be genetically engineered to produce (see "Artificially Firing Neurons," TR35, September/October 2006). When the neuron is exposed to light, the protein triggers electrical activity within the cell that spreads to the next neuron in the circuit. Researchers can thus use light to activate certain neurons and look for specific responses--a twitch of a muscle, increased energy, or a wave of activity in a different part of the brain.

Deisseroth is using this genetic light switch to study the biological basis of depression. Working with a group of rats that show symptoms similar to those seen in depressed humans, researchers in his lab have inserted the switch into neurons in different brain areas implicated in depression. They then use an optical fiber to shine light onto those cells, looking for activity patterns that alleviate the symptoms. Deisseroth says the findings should help scientists develop better antidepressants: if they know exactly which cells to target, they can look for molecules or delivery systems that affect only those cells. "Prozac goes to all the circuits in the brain, rather than just the relevant ones," he says. "That's part of the reason it has so many side effects."

00:29 Posted in Neurotechnology & neuroinformatics | Permalink | Comments (0) | Tags: neurotechnology

MindFit Clinical Trial Results Announced

Against conventional wisdom, the computer training in MindFit(tm) cognitive skill assessment and training software, created by CogniFit, Ltd. (http://www.cognifit.com), was found to improve short-term memory, spatial relations and attention focus--all skills used in driving and other daily activities that maintain our independence as we age.

The trial was conducted at the Tel-Aviv Sourasky Medical Center of Tel-Aviv University in Israel, where researchers are taking a leading role in the study of age-related disorders. During the two-year clinical trial, doctors conducted a prospective, randomized, double-blind study with active comparators of 121 self-referred volunteer participants age 50 and older. Each study participant was randomly assigned to spend 30 minutes, three times a week during the course of three months at home, using either MindFit or sophisticated computer games.

While all study participants benefited from the use of computer games, MindFit users experienced greater improvement in the cognitive domains of spatial short term memory, visuo-spatial learning and focused attention. Additionally, MindFit users in the study with lower baseline cognitive performance gained more than those with normal cognition, showing the potential therapeutic effect of home-based computer training software in those already suffering the effects of aging or more serious diseases.

"These research findings show unequivocally that MindFit, which requires no previous computer experience of users, keeps minds sharper than other computer games and software can," said Prof. Shlomo Breznitz, Ph.D., founder and president of CogniFit. "In fact, the same cognitive domains that MindFit keeps sharp are also central in most daily activities, including driving, that enable aging independently."

Breznitz continued, "These findings support CogniFit's belief that if you exercise your brain just as you do your muscles, you can build the speed and accuracy of your mental functions significantly. 'Working out' with MindFit three times a week from the comfort of your home will yield similar results for your brain as exercising at the gym with that same frequency does for your muscles."

"We are additionally encouraged by the implications of our findings for those already below baseline in cognitive performance," said Nir Giladi, M.D., principal investigator, senior neurologist for the Department of Neurology for Tel-Aviv University's Tel-Aviv Sourasky Medical Center and faculty member in Tel-Aviv University's Sackler School of Medicine. "In the future, we may research MindFit's effect on Alzheimer's disease and forms of dementia."

MindFit software helps to assess and build overall cognitive skills for baby boomers, seniors and people of all ages. In other research studies, MindFit has helped users to improve their short-term memory by 18 percent. The comprehensive cognitive training program assesses, trains and enhances cognitive skills, including memory, focus, learning and concentration, and safeguards overall cognitive vitality, an overall concept patented by CogniFit.

After an initial assessment session, users are encouraged to train with the software on their home PCs three times a week for 20 minutes a day. Then, MindFit provides fun, individualized training to match users' unique cognitive skill sets, changing exercises and levels to suit each individual's unique needs. No other cognitive assessment or training software product on the market has that personal tailoring technology.

00:22 Posted in Brain training & cognitive enhancement | Permalink | Comments (0) | Tags: serious games

Mar 17, 2007

Pigeonbots

From Practical Neurotechnology

Scientists at the Robot Engineering Technology Research Center of east China's Shandong University of Science and Technology claim to have implanted micro electrodes in the brain of a pigeon so they can command it to fly right or left or up or down.

The implants stimulated different areas of the pigeon's brain according to signals sent by the scientists via computer, and forced the bird to comply with their commands.

http://blog.wired.com/defense/2007/02/cyborg_flying_r.html

http://tenementpalm.blogspot.com/2007/02/psb-buys-tiny-ge...

http://english.people.com.cn/200702/27/eng20070227_352761...

14:51 Posted in Pervasive computing | Permalink | Comments (0) | Tags: brain-computer interface

EEG neurofeedback for cognitive enhancement in the elderly

EEG neurofeedback: a brief overview and an example of peak alpha frequency training for cognitive enhancement in the elderly.

Clin Neuropsychol. 2007 Jan;21(1):110-29

Authors: Angelakis E, Stathopoulou S, Frymiare JL, Green DL, Lubar JF, Kounios J

Neurofeedback (NF) is an electroencephalographic (EEG) biofeedback technique for training individuals to alter their brain activity via operant conditioning. Research has shown that NF helps reduce symptoms of several neurological and psychiatric disorders, with ongoing research currently investigating applications to other disorders and to the enhancement of non-disordered cognition. The present article briefly reviews the fundamentals and current status of NF therapy and research and illustrates the basic approach with an interim report on a pilot study aimed at developing a new NF protocol for improving cognitive function in the elderly. EEG peak alpha frequency (PAF) has been shown to correlate positively with cognitive performance and to correlate negatively with age after childhood. The present pilot study used a double-blind controlled design to investigate whether training older individuals to increase PAF would result in improved cognitive performance. The results suggested that PAF NF improved cognitive processing speed and executive function, but that it had no clear effect on memory. In sum, the results suggest that the PAF NF protocol is a promising technique for improving selected cognitive functions.
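As a rough illustration of the quantity being trained, peak alpha frequency can be read off as the frequency with the most power inside the alpha band of an EEG spectrum. The sketch below is not the authors' protocol: the 8-13 Hz band limits, the 0.25 Hz frequency grid, the naive DFT and the function names are all assumptions chosen for illustration, and the signal is synthetic.

```python
import math

def spectrum_on_grid(samples, sample_rate, f_lo, f_hi, step=0.25):
    """Naive DFT power evaluated on a frequency grid from f_lo to f_hi (Hz)."""
    n = len(samples)
    mean = sum(samples) / n  # remove the DC offset before analysis
    points = []
    f = f_lo
    while f <= f_hi + 1e-9:
        re = sum((x - mean) * math.cos(2 * math.pi * f * i / sample_rate)
                 for i, x in enumerate(samples))
        im = sum((x - mean) * math.sin(2 * math.pi * f * i / sample_rate)
                 for i, x in enumerate(samples))
        points.append((f, (re * re + im * im) / n))
        f += step
    return points

def peak_alpha_frequency(samples, sample_rate, band=(8.0, 13.0)):
    """Frequency (Hz) with the highest power inside the assumed alpha band."""
    points = spectrum_on_grid(samples, sample_rate, band[0], band[1])
    return max(points, key=lambda fp: fp[1])[0]

# Synthetic 4-second "EEG": a 10.5 Hz alpha rhythm plus a weaker 6 Hz component.
sample_rate = 128
signal = [math.sin(2 * math.pi * 10.5 * i / sample_rate)
          + 0.5 * math.sin(2 * math.pi * 6.0 * i / sample_rate)
          for i in range(4 * sample_rate)]
print(peak_alpha_frequency(signal, sample_rate))
```

A feedback loop would recompute this estimate on a sliding window and reward increases in it; real systems would also use windowing and artifact rejection, which are omitted here.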

14:47 Posted in Biofeedback & neurofeedback | Permalink | Comments (0) | Tags: biofeedback, neurofeedback

CFP: "Online Communities and People with Disabilities" Special Issue of TACCESS

Via Usability News

Call for papers: Special Issue of ACM Transactions on Accessible Computing (TACCESS) on "Online Communities and People with Disabilities"

Guest Editors: Panayiotis Zaphiris & Ulrike Pfeil

Centre for HCI Design

City University London

The term 'online community' is generally used to refer to people who meet and communicate in an online environment. Rather than physical proximity, researchers use the nature and strength of relationships among the members to determine the characteristics of an online community. Online communities are formed around similar interests (for example discussions around a disability) of the members. People who have similar needs or experiences meet in online communities in order to exchange valuable resources and/or to engage in social support. An increasing number of people spend time in online communities to make friends, develop relationships, and exchange emotional support. Social interaction online can especially be beneficial for people with special needs (and for older people) as it allows them to stay in contact with family and friends despite the disability or time-constraints.

The emphasis for TACCESS publications is placed on experimental results, although strong papers presenting new theoretical insights or positions are also given consideration. Additional information for prospective authors can be found at: http://www.is.umbc.edu/taccess/authors.html

THEMES

Contributions from both the academic community and industry are most welcomed. Potential topics include (but are not limited to) the following:

* Design approaches and techniques suitable for empathic online communities

* Usability and accessibility studies regarding online communities for people with disabilities

* Theoretical foundations for analysing empathic online communities

* Social and Cultural Issues of online communities for people with disabilities

* New methods and techniques (eg Social Network Analysis)

* Ethical issues to be considered when studying online communities for people with disabilities

* The potential of 3D Virtual Worlds for such communities

SUBMISSION PROCESS

Prospective authors should as soon as possible (but before 15th of May 2007) submit a tentative title and a short abstract (maximum 150 words) to Panayiotis Zaphiris (zaphiri@soi.city.ac.uk). Authors of abstracts that are judged to fit the themes of the special issue will be promptly invited to submit a full paper. Full papers should follow the journal's suggested writing format viewable at http://www.is.umbc.edu/taccess/authors.html and should be submitted directly to the editors of this special issue (zaphiri@soi.city.ac.uk).

Important dates:

o Abstract submission: By 15th of May 2007

o Full paper submission: 15th July 2007

o Response to authors: 25th September 2007

o Final version of papers: 25th October 2007

14:45 Posted in Call for papers | Permalink | Comments (0) | Tags: accessibility

Mar 16, 2007

The Amygdaloids

From the Amygdaloids website

What do you get when you mix the son of a Louisiana butcher, a dome builder, a philosophy major, and an ex-Israeli soldier? NYU scientists who play rock n' roll, of course. Joseph LeDoux, Daniela Schiller, and Nina Galbraith Curley are neuroscientists who study emotion and memory functions of the brain, and Tyler Volk is an environmental scientist who has also written about mind and brain. Their original songs are about mental life and mental disorders (Mind-Body Problem, An Emotional Brain, If You Want Your Brain to Last). They also cover other "heavy mental" tunes like Manic Depression, 8 Miles High, and 19th Nervous Breakdown. When all else fails, they slip into the blues for. Newsday, not surprisingly, describes The Amygdaloids as "Heavy Mental."

22:50 | Permalink | Comments (0)

Egocentric depth judgments in optical, see-through augmented reality

Egocentric depth judgments in optical, see-through augmented reality.

IEEE Trans Vis Comput Graph. 2007 May-Jun;13(3):429-42

Authors: Swan II JE, Jones A, Kolstad E, Livingston MA, Smallman HS

Abstract: A fundamental problem in optical, see-through augmented reality (AR) is characterizing how it affects the perception of spatial layout and depth. This problem is important because AR system developers need to both place graphics in arbitrary spatial relationships with real-world objects, and to know that users will perceive them in the same relationships. Furthermore, AR makes possible enhanced perceptual techniques that have no real-world equivalent, such as x-ray vision, where AR users are supposed to perceive graphics as being located behind opaque surfaces. This paper reviews and discusses protocols for measuring egocentric depth judgments in both virtual and augmented environments, and discusses the well-known problem of depth underestimation in virtual environments. It then describes two experiments that measured egocentric depth judgments in AR. Experiment I used a perceptual matching protocol to measure AR depth judgments at medium and far-field distances of 5 to 45 meters. The experiment studied the effects of upper versus lower visual field location, the x-ray vision condition, and practice on the task. The experimental findings include evidence for a switch in bias, from underestimating to overestimating the distance of AR-presented graphics, at ~23 meters, as well as a quantification of how much more difficult the x-ray vision condition makes the task. Experiment II used blind walking and verbal report protocols to measure AR depth judgments at distances of 3 to 7 meters. The experiment examined real-world objects, real-world objects seen through the AR display, virtual objects, and combined real and virtual objects. The results give evidence that the egocentric depth of AR objects is underestimated at these distances, but to a lesser degree than has previously been found for most virtual reality environments. The results are consistent with previous studies that have implicated a restricted field-of-view, combined with an inability for observers to scan the ground plane in a near-to-far direction, as explanations for the observed depth underestimation.

22:24 Posted in Virtual worlds | Permalink | Comments (0) | Tags: virtual reality, augmented reality, mixed reality

Snout Performance & Public Forum

From Urban Tapestries

__________________________________________________

Venue: Cargo, 83 Rivington St, Kingsland Viaduct, London, EC2A 3AY

The performance will start at Cargo and the route will include Hoxton Square and Hoxton Market

Dates/Times: Tuesday 10 April, Performance 10am, Conference 1.30-5pm.

Tube: Old St, Liverpool St

Admission: Free

Access: Limited, please call in advance for details

Information: +44 (0)20 7729 9616, www.iniva.org, institute@iniva.org

Supported by Arts Council England & Esmée Fairbairn

__________________________________________________

With increasing concerns about climate change, individuals and communities are looking for new ways to take action and make a real and lasting impact.

In the Snout 'carnival' performance and public forum, artists, producers, performers and computer programmers demonstrate how to create wearable technologies from scavenged media, in order to map the invisible gases that affect our everyday environment. The project by inIVA, Proboscis and researchers from Birkbeck College also explores how communities can use this visual evidence to participate in or initiate local action.

The performance will show in action two prototype Snout sensor 'wearables' based on traditional carnival costumes. Carnival is a time of suspension of the normal activities of everyday life - a time when the fool becomes king for a day, when social hierarchies are inverted, a time when everyone is equal. Snout proposes 'participatory sensing' as a lively addition to the popular artform of carnival costume design, engaging the community in an investigation of its own environment, something usually done by local authorities and state agencies.

A public forum on 'participatory sensing and media scavenging' will be held after the performance. This will demonstrate the Snout wearables, discuss evidence collecting for environmental action and how communities can reflect on the personal impact of pollution and the environment. The forum, led by Giles Lane (Proboscis) and Dr George Roussos (Birkbeck) will look at 'participatory sensing' as a form of social engagement. The forum will share tactics on how to 'scavenge' free online services and resources, as well as exploring the relationship between information, aesthetics and design and how to make these ideas and issues accessible to more people.

Snout is a new collaboration between inIVA, Proboscis and researchers from Birkbeck College exploring relationships between the body, community and the environment. It builds on a previous collaboration Feral Robots (with Natalie Jeremijenko) to investigate how data can be collected from environmental sensors as part of popular social and cultural activities.

22:22 Posted in Wearable & mobile | Permalink | Comments (0) | Tags: wearable

Methods of Understanding and Designing For Mobile Communities

Have a look at this PhD thesis by Jeff Axup - a lot of interesting stuff about mobile research methods...

Download the entire thesis here:

Methods of Understanding and Designing For Mobile Communities

Major research outcomes presented in this thesis lie in three areas: 1) methods, 2) technology designs, and 3) backpacker culture. Five studies of backpacker behaviour and requirements form the core of the research. The methods used are in-situ and exploratory, and apply both novel and existing techniques to the domain of backpackers and mobile groups.

Methods demonstrated in this research include: field trips for exploring mobile group behaviour and device usage, a social pairing exercise to explore social networks, contextual postcards to gain distributed feedback, and blog analysis which provides post-hoc diary data. Theoretical contributions include: observations on method triangulation, a taxonomy of mobility research, method templates to assist method usage, and identification of key categories leading to mobile group requirements. Design related outcomes include: 57 mobile tourism product ideas, a format for conveying product concepts, and a design for a wearable device to assist mobile researchers.

Our understanding of backpacker culture has also improved as a consequence of the research. It has also generated user requirements to aid mobile development, methods of visualising mobile groups and communities, and a listing of relevant design tensions. Additionally, the research has added to our understanding of how new technologies such as blogs, SMS and iPods are being used by backpackers and how mobile groups naturally communicate.

22:15 Posted in Wearable & mobile | Permalink | Comments (0) | Tags: mobile, wearable

Neurofeedback for Children with ADHD

Neurofeedback for Children with ADHD: A Comparison of SCP and Theta/Beta Protocols.

Appl Psychophysiol Biofeedback. 2007 Mar 14;

Authors: Leins U, Goth G, Hinterberger T, Klinger C, Rumpf N, Strehl U

Behavioral and cognitive improvements in children with ADHD have been consistently reported after neurofeedback-treatment. However, neurofeedback has not been commonly accepted as a treatment for ADHD. This study addresses previous methodological shortcomings while comparing a neurofeedback-training of Theta-Beta frequencies and training of slow cortical potentials (SCPs). The study aimed at answering (a) whether patients were able to demonstrate learning of cortical self-regulation, (b) if treatment leads to an improvement in cognition and behavior and (c) if the two experimental groups differ in cognitive and behavioral outcome variables. SCP participants were trained to produce positive and negative SCP-shifts while the Theta/Beta participants were trained to suppress Theta (4-8 Hz) while increasing Beta (12-20 Hz). Participants were blind to group assignment. Assessment included potentially confounding variables. Each group comprised 19 children with ADHD (aged 8-13 years). The treatment procedure consisted of three phases of 10 sessions each. Both groups were able to intentionally regulate cortical activity and improved in attention and IQ. Parents and teachers reported significant behavioral and cognitive improvements. Clinical effects for both groups remained stable six months after treatment. Groups did not differ in behavioral or cognitive outcome.
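To make the Theta/Beta protocol more concrete, here is a minimal sketch of the kind of quantity such training could feed back: the ratio of spectral power in the theta band (4-8 Hz) to power in the beta band (12-20 Hz), using the band limits quoted in the abstract. Everything else (the naive DFT, the function names, the synthetic signal) is an assumption for illustration and is not taken from the study.

```python
import math

def band_power(samples, sample_rate, f_lo, f_hi):
    """Sum naive-DFT power over the bins whose frequency lies in [f_lo, f_hi] Hz."""
    n = len(samples)
    total = 0.0
    for k in range(1, n // 2):
        freq = k * sample_rate / n
        if f_lo <= freq <= f_hi:
            re = sum(x * math.cos(2 * math.pi * k * i / n)
                     for i, x in enumerate(samples))
            im = sum(x * math.sin(2 * math.pi * k * i / n)
                     for i, x in enumerate(samples))
            total += (re * re + im * im) / n
    return total

def theta_beta_ratio(samples, sample_rate):
    """Theta (4-8 Hz) power divided by beta (12-20 Hz) power."""
    theta = band_power(samples, sample_rate, 4.0, 8.0)
    beta = band_power(samples, sample_rate, 12.0, 20.0)
    return theta / beta

# Synthetic 2-second signal: strong 6 Hz theta, weaker 16 Hz beta.
sample_rate = 128
signal = [2.0 * math.sin(2 * math.pi * 6.0 * i / sample_rate)
          + 1.0 * math.sin(2 * math.pi * 16.0 * i / sample_rate)
          for i in range(2 * sample_rate)]
print(theta_beta_ratio(signal, sample_rate))
```

Under this reading, successful training (less theta, more beta) shows up as a falling ratio; a real system would compute it over short sliding windows of artifact-cleaned EEG.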

21:48 Posted in Biofeedback & neurofeedback | Permalink | Comments (0) | Tags: biofeedback, neurofeedback

Socially assistive robotics for post-stroke rehabilitation

Journal of NeuroEngineering and Rehabilitation

Maja J Matarić

Background: Although there is a great deal of success in rehabilitative robotics applied to patient recovery post stroke, most of the research to date has dealt with providing physical assistance. However, new rehabilitation studies support the theory that not all therapy need be hands-on. We describe a new area, called socially assistive robotics, that focuses on non-contact patient/user assistance. We demonstrate the approach with an implemented and tested post-stroke recovery robot and discuss its potential for effectiveness. Results: We describe a pilot study involving an autonomous assistive mobile robot that aids stroke patient rehabilitation by providing monitoring, encouragement, and reminders. The robot navigates autonomously, monitors the patient's arm activity, and helps the patient remember to follow a rehabilitation program. We also show preliminary results from a follow-up study that focused on the role of robot physical embodiment in a rehabilitation context. Conclusion: We outline and discuss future experimental designs and factors toward the development of effective socially assistive post-stroke rehabilitation robots.

21:46 Posted in AI & robotics | Permalink | Comments (0) | Tags: robotics, artificial intelligence

Robot/computer-assisted motivating systems for personalized, home-based, stroke rehabilitation

21:45 Posted in AI & robotics | Permalink | Comments (0) | Tags: robotics, artificial intelligence

New eye-tracking system analyzes the interest level of TV viewers

21:41 Posted in Research tools | Permalink | Comments (0) | Tags: eye-tracking

VR and museums: call for papers

Deadline: Friday April 27, 2007 :: Contributions are welcomed for a new book addressing the construction and interpretation of virtual artefacts within virtual world museums and within physical museum spaces. Particular emphasis is placed on theories of spatiality and strategies of interpretation.

The editors seek papers that intervene in critical discourses surrounding virtual reality and virtual artefacts, to explore the rapidly changing temporal, spatial and theoretical boundaries of contemporary museum display practice. We are especially interested in spatiality as it is employed in the construction of virtual artefacts, as well as the roles these spaces enact as signifiers of historical narrative and sites of social interaction.

We are also interested in the relationship between real-world museums and virtual world museums, with a view to interrogating the construction of meaning within, across and between both. We welcome original scholarly contributions on the topic of new cultural practices and communities related to virtual reality in the context of museum display practice. Papers might address, but are in no way limited to, the following:

* Authenticity and artificiality

* Exploration and discovery

* Physical vs virtual

* Representation/interpretation of virtual reality artefacts - as 3D spaces on screen or in a physical gallery

* Museum visiting in virtual space

* Representation of physical museum spaces in virtual worlds and their relationship to cultural definitions of museum spaces.

Please send a proposal of 500-750 words and a contributor's bio by Friday April 27, 2007. Authors will be notified by Thursday May 31, 2007. Final drafts of papers are due by Monday October 1, 2007.

Please send your proposal to:

Tara Chittenden

Room 201

Strategic Research Unit

113 Chancery Lane

London WC2A 1PL

Or via email: tara.chittenden[at]lawsociety.org.uk

21:36 Posted in Virtual worlds | Permalink | Comments (0) | Tags: virtual reality, augmented reality, mixed reality

Mediamatic workshop

Mediamatic organizes a new workshop--Hybrid World Lab--in which the participants develop prototypes for hybrid world media applications. Where the virtual world and the physical world used to be quite separate realms of reality, they are quickly becoming two faces of the same hybrid coin. This workshop investigates the increasingly intimate fusion of digital and physical space from the perspective of a media maker.

The workshop is an intense process in which the participants explore the possibilities of the physical world as interface to online media: location based media, everyday objects as media interfaces, urban screens, and cultural application of RFID technology. Every morning lectures and lessons bring in new perspectives, project presentations and introductions to the hands-on workshop tools. Every afternoon the participants work on their own workshop projects. In 5 workshop days every participant will develop a prototype of a hybrid world media project, assisted by outstanding international trainers, lecturers and technical assistants. The workshop closes with a public presentation in which the issues are discussed and the results are shown.

Topics: Some of the topics that will be investigated in this workshop are: Cultural application and impact of RFID technology, internet-of-things. Using RFID in combination with other kinds of sensors. Ubiquitous computing (ubicomp) and ambient intelligence: services and applications that use chips embedded in household appliances and in public space. Locative media tools, car navigation systems, GPS tools, location sensitive mobile phones. The web as interface to the physical world: geotagging and mashups with Google Maps & Google Earth. Games in hybrid space.

21:33 Posted in Augmented/mixed reality | Permalink | Comments (0) | Tags: virtual reality, augmented reality, mixed reality

Mar 15, 2007

A Massively Shared Virtual World: Solipsis

Solipsis is a pure peer-to-peer system for a massively shared virtual world. There are no central servers at all: it only relies on end-users' machines. Solipsis is a public virtual territory. The world is initially empty and only users will fill it by creating and running entities. No pre-existing cities, inhabitants or scenario to respect... Solipsis is open-source, so everybody can enhance the protocols and the algorithms. Moreover, the system architecture clearly separates the different tasks, so that peer-to-peer hackers as well as multimedia geeks can find a good place to have fun here! Current versions of Solipsis give the opportunity to act as pioneers in a pre-Cambrian world. You only have a 2D representation of the virtual world and some basic tools devoted to communications and interactions.

00:58 | Permalink | Comments (0) | Tags: virtual reality

NeuroZappingFolks

LX 2.0: Contemporary Online Experiments: NeuroZappingFolks is a non-linear zapping through the Internet, a path leading to the inside of a web of relations, a web that can be explored from one tag to a site, to another tag, to another site... from word to image to word to image. NeuroZappingFolks is then the simulation of a brain lost in the web (lost between servers, but also lost in Internet's double identity: word and image).

00:54 Posted in Cyberart, Research tools | Permalink | Comments (0) | Tags: cyberart

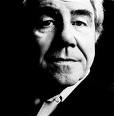

French philosopher Jean Baudrillard dies

(Associated Press)

March 6, 2007, PARIS

Jean Baudrillard, a French philosopher and social theorist known for his provocative commentaries on consumerism, excess and what he said was the disappearance of reality, died Tuesday, his publishing house said. He was 77.

Baudrillard died at his home in Paris after a long illness, said Michel Delorme, of the Galilee publishing house. The two men had worked together since 1977, when "Oublier Foucault" (Forget Foucault) was published, one of about 30 books by Baudrillard, Delorme said by telephone.

Among his last published books was "Cool Memories V," in 2005. Baudrillard, a sociologist by training, is perhaps best known for his concepts of "hyperreality" and "simulation."

00:50 | Permalink | Comments (0)